
AI agent observability is the practice of collecting, analysing, and acting on rich telemetry from AI agents so that every reasoning step, tool call, and decision is transparent, debuggable, and auditable. As multi-agent systems move from demos into production, handling financial workflows, strategy generation, risk management, and portfolio optimisation, the risk of silent failures, hallucinations, and compliance violations grows dramatically.

This guide covers what AI agent observability is, how it differs from traditional application monitoring, the five pillars that make it work, and how to implement it in practice using W&B Weave.

Let’s begin by level-setting with a basic question: what is AI agent observability?

AI agent observability is the practice of tracking and analyzing the internal reasoning, tool usage, and decisions of autonomous AI agents in real-time. It provides deep visibility into the “black box” of LLM-based systems, enabling developers to debug, evaluate performance, and ensure safety throughout the agent’s lifecycle.

Building a demo agent is easy. You string together a few prompts, hook up a tool or two, and watch the agent perform. But taking that agent from a local notebook to a production environment handling sensitive financial workflows is a different challenge entirely.

In the world of autonomous agents, especially multi-agent systems that make decisions, execute trades, or handle portfolio risk, the “black box” nature of LLMs becomes a liability. When an agent hallucinates a risk factor or fails to call a tool correctly, you can’t rely on CPU usage or HTTP 500 error rates to figure out why.

Unlike traditional APM (Application Performance Monitoring), AI agent observability isn’t only about system health. It’s about understanding the reasoning behind the machine’s decisions. Concretely, it means collecting prompts, intermediate reasoning, tool calls, and final outputs as first-class telemetry.

When an AI agent is observable, every step it takes, from the prompts it generates to the external API calls it makes, can be tracked and analysed. This level of insight is crucial for optimising performance, debugging failures, and building trustworthy, compliant AI systems.

If you come from a traditional DevOps background, you’re used to asking: “Is the service healthy?” You look at latency, memory pressure, and request throughput.

AI agents introduce a fundamentally different layer of complexity. They are non-deterministic and context-heavy. A request might succeed technically (return a 200 OK status) but fail catastrophically in practice, for example by recommending a high-risk trade for a conservative portfolio.

Agent observability forces us to ask different questions:

In a multi-agent system, this complexity compounds. An Ingestion Agent passes data to a Features Agent, which hands it off to a Strategy Agent, which then feeds it to a Risk Agent. If the Risk Agent fails, the root cause might actually be a subtle data drift in the Ingestion Agent three steps upstream. Without deep observability, you’re flying blind. With it, you get a navigable trace of the entire thought process.

Traditional observability was designed for deterministic software, focusing on infrastructure health through CPU usage, memory, network statistics, and linear request-response traces. The failure modes are usually crashes or timeouts.

Agent observability extends this paradigm in several important ways:

| Dimension | Traditional Observability | Agent Observability |
|---|---|---|
| Primary focus | Infrastructure health | Reasoning, tool usage, behaviour |
| Telemetry | HTTP requests, DB queries | Prompts, responses, intermediate “thoughts,” evaluation scores |
| Flow structure | Linear request-response | Hierarchical, branched, often with loops or retries |
| Key KPIs | Throughput, error rates | Token usage, hallucination rates, semantic drift, task success |
| Failure modes | Crashes, timeouts | Fluent but wrong answers, policy violations, stale context |

The same prompt can produce different outputs based on retrieved documents, tool responses, and prior conversation history. Therefore, observability must track the exact state and inputs at every step, not just “request took 230ms.”

The complexity multiplies in multi-agent systems built with frameworks like CrewAI, LangGraph, or custom orchestration. These systems involve inter-agent communication, delegation, and shared state.

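Consider a finance agent stack in which an Ingestion Agent feeds a Features Agent, which feeds a Strategy Agent, which in turn feeds a Risk Agent.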

Without observability, diagnosing a failure is nearly impossible. You might know a trade was bad, but you won’t know if the Strategy Agent hallucinated or if the Ingestion Agent provided stale data hours earlier.

With a tool like W&B Weave, you get a single, navigable trace of the entire workflow. You can see the hand-offs between agents, identify exactly where an invariant was broken, and pinpoint where a hallucination originated.

To effectively observe these systems, we need to move beyond simple logging. Agent observability is structured into five distinct pillars: Monitoring, Tracing, Logging, Evaluation, and Governance.

We track how long each part of a process takes, including retrieving information, reasoning, and generating results, and we look at the median (p50), the slower tail (p95), and the rare slowest requests (p99). Time to first token is especially important for a smooth user experience.

Resource KPIs are equally important. We track token usage (prompt vs. completion) by agent and by step to manage costs. We also monitor reliability through task success rates and tool call success rates, distinguishing between LLM errors, tool errors, and infrastructure failures.
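As a rough illustration of these monitoring KPIs, the sketch below aggregates latency percentiles, token usage per agent, and errors by kind from a list of step records. The `StepRecord` shape and the `"llm"` / `"tool"` / `"infra"` labels are assumptions made for this example, not a Weave schema.

```python
from collections import defaultdict
from dataclasses import dataclass
from statistics import quantiles
from typing import Optional


@dataclass
class StepRecord:
    """One agent step; field names are illustrative, not a Weave schema."""
    agent: str
    latency_ms: float
    prompt_tokens: int
    completion_tokens: int
    error_kind: Optional[str] = None  # "llm", "tool", "infra", or None on success


def latency_percentiles(latencies_ms):
    # quantiles(n=100) returns 99 cut points: index 49 is p50, 94 is p95, 98 is p99.
    cuts = quantiles(latencies_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "p99": cuts[98]}


def summarize(records):
    tokens = defaultdict(lambda: {"prompt": 0, "completion": 0})
    errors = defaultdict(int)
    for r in records:
        # Split prompt and completion tokens per agent so cost can be attributed.
        tokens[r.agent]["prompt"] += r.prompt_tokens
        tokens[r.agent]["completion"] += r.completion_tokens
        if r.error_kind is not None:
            errors[r.error_kind] += 1
    return {
        "tokens_by_agent": dict(tokens),
        "errors_by_kind": dict(errors),
        "success_rate": 1 - sum(errors.values()) / len(records),
    }


records = [
    StepRecord("ingestion", 120.0, 300, 40),
    StepRecord("strategy", 950.0, 1200, 350),
    StepRecord("strategy", 1100.0, 1100, 300, error_kind="llm"),
    StepRecord("risk", 400.0, 800, 120),
]
summary = summarize(records)
```

Keeping the error kind on each record is what lets a dashboard separate "the model produced something unusable" from "the tool or the infrastructure failed", which usually call for different fixes.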

In Weave, much of this data is captured automatically through tracing spans. Every function decorated with @weave.op() records timing and metadata that feeds into monitoring dashboards.

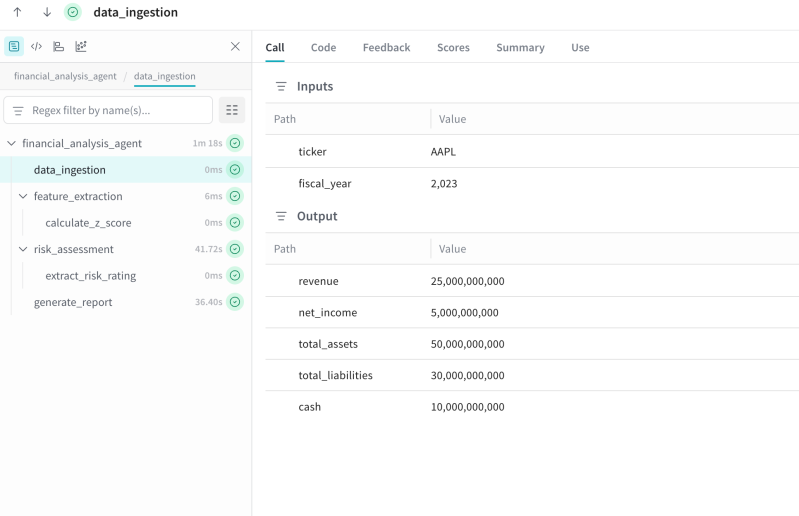

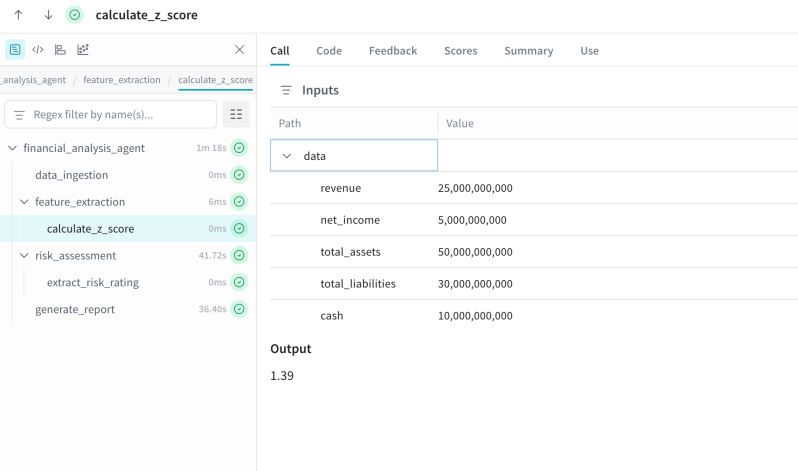

Tracing is the backbone of agent observability. For a multi-agent pipeline, a trace is not a flat list of logs but a hierarchical tree. A root span, perhaps named financial_analysis_agent, contains child spans for data_ingestion, feature_extraction, risk_assessment, and generate_report.

Each span captures the inputs and outputs (sanitised for security), the duration, and metadata about the model and tools used. Custom attributes are vital: tagging a span with ticker, user_id, or strategy_id allows you to slice the data later.
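To make the tree structure concrete, here is a minimal, standard-library-only sketch of a span tracer. It is not how Weave is implemented; the `Span` class, the `span` context manager, and the `find` helper are hypothetical names used only to show how nesting and custom attributes fit together.

```python
import time
from contextlib import contextmanager
from contextvars import ContextVar
from dataclasses import dataclass, field


@dataclass
class Span:
    name: str
    attributes: dict = field(default_factory=dict)
    children: list = field(default_factory=list)
    duration_ms: float = 0.0


# Tracks the span that is currently open, so new spans know their parent.
_current = ContextVar("current_span", default=None)


@contextmanager
def span(name, **attributes):
    node = Span(name, attributes)
    parent = _current.get()
    if parent is not None:
        parent.children.append(node)  # nest under the enclosing span
    token = _current.set(node)
    start = time.perf_counter()
    try:
        yield node
    finally:
        node.duration_ms = (time.perf_counter() - start) * 1000
        _current.reset(token)  # restore the parent as the current span


def find(root, **wanted):
    """Return names of spans whose attributes match every key in `wanted`."""
    matches = all(root.attributes.get(k) == v for k, v in wanted.items())
    hits = [root.name] if matches else []
    for child in root.children:
        hits.extend(find(child, **wanted))
    return hits


with span("financial_analysis_agent", ticker="ACME", user_id="u-1") as root:
    with span("data_ingestion", ticker="ACME"):
        pass
    with span("risk_assessment", ticker="ACME", strategy_id="s-7"):
        pass
```

Calling `find(root, strategy_id="s-7")` then returns only the risk assessment span, which is the kind of slicing that custom attributes make possible.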

In Weave, this is achieved by decorating Python functions with @weave.op(), which automatically constructs this hierarchy:

```python
import weave

@weave.op()
def analyze_company(ticker: str, fiscal_year: int) -> dict:
    # The helpers called here are defined elsewhere in the pipeline; each one
    # that is also decorated with @weave.op() is recorded as a child span.
    financials = fetch_financial_data(ticker, fiscal_year)
    ratios = calculate_financial_ratios(financials)
    risk = assess_credit_risk(ticker, ratios)
    summary = generate_executive_summary(ticker, ratios, risk)
    return {"ticker": ticker, "ratios": ratios, "risk": risk, "summary": summary}
```

When analyze_company calls fetch_financial_data, and that function is also decorated with @weave.op(), Weave automatically nests it as a child span. The result is a complete, inspectable trace tree, with no manual span management required.

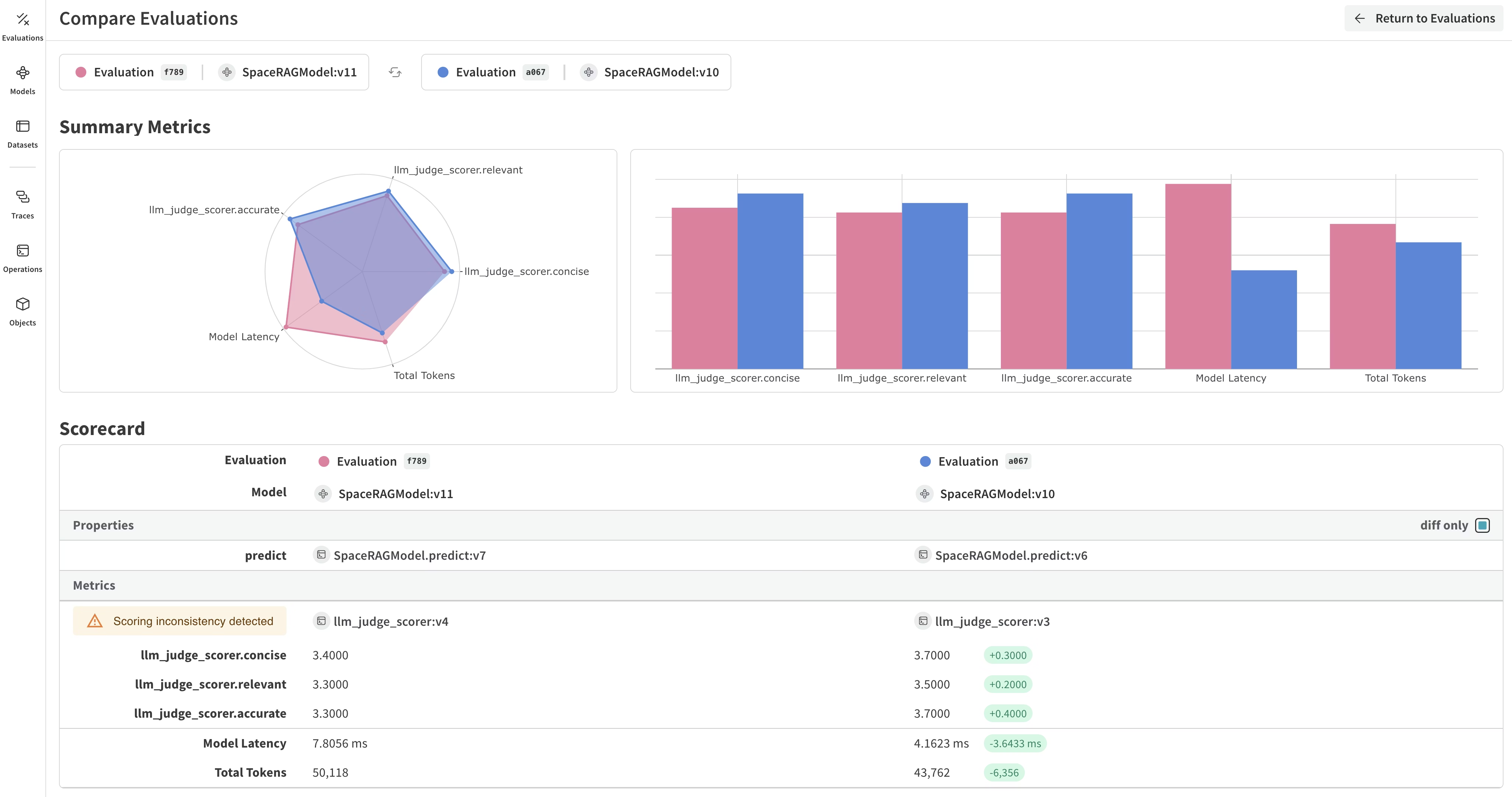

This is perhaps the biggest differentiator from standard software observability. Monitoring tells you if the agent is running; evaluation tells you if it is correct.

Evaluations fall into three categories:

Weave supports this workflow by managing Datasets (test cases), Scorers (functions that judge output), and Evaluations (runs that apply scorers to datasets). This means you can run regression suites on every prompt change and track quality over time, not just at launch.
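The dataset, scorer, and evaluation roles can be sketched in plain Python as below. This is an illustration of the pattern only, not Weave's API: `toy_risk_model`, `exact_match`, and `run_evaluation` are hypothetical names, and the rows are made-up test cases.

```python
from statistics import mean

# Dataset: test cases with an expected label (values invented for the example).
dataset = [
    {"debt_to_equity": 3.5, "expected": "high"},
    {"debt_to_equity": 0.4, "expected": "low"},
    {"debt_to_equity": 2.1, "expected": "low"},   # the toy model gets this one wrong
    {"debt_to_equity": 5.0, "expected": "high"},
]


def toy_risk_model(row):
    # Stand-in for an agent call; a real model would be an LLM-backed function.
    return "high" if row["debt_to_equity"] > 2.0 else "low"


def exact_match(output, expected):
    # Scorer: judges a single output against the expected answer.
    return output == expected


def run_evaluation(model, rows, scorers):
    # Evaluation: apply every scorer to every row and average the results.
    scores = {scorer.__name__: [] for scorer in scorers}
    for row in rows:
        output = model(row)
        for scorer in scorers:
            scores[scorer.__name__].append(float(scorer(output, row["expected"])))
    return {name: mean(values) for name, values in scores.items()}


results = run_evaluation(toy_risk_model, dataset, [exact_match])
```

Because the dataset and scorers stay fixed while the model or prompt changes, re-running the same evaluation after each change gives a comparable quality number over time.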

For regulated industries like finance, governance is the audit trail. You need to know exactly which version of the prompt, which model snapshot, and which code configuration led to a specific financial decision.

Governance ties observability to compliance. It requires tight coupling between your code versions, model versions, and dataset versions. A full audit trail answers: Who changed the prompt? When was it changed? How did that change affect the agent’s behaviour? This allows you to reconstruct past decisions, a requirement that is indispensable for GDPR, CCPA, the EU AI Act, and financial regulations.
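One way to picture such an audit trail is a record that pins every decision to the exact prompt, model, code, and dataset versions behind it. The sketch below uses only the standard library; the field names and the `audit_record` helper are assumptions for illustration rather than a prescribed schema.

```python
import hashlib
import json
from datetime import datetime, timezone


def fingerprint(text: str) -> str:
    # A short content hash: any change to the prompt text changes the hash.
    return hashlib.sha256(text.encode("utf-8")).hexdigest()[:12]


def audit_record(prompt_template, model_snapshot, code_version,
                 dataset_version, changed_by, decision):
    return {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prompt_hash": fingerprint(prompt_template),
        "model_snapshot": model_snapshot,
        "code_version": code_version,
        "dataset_version": dataset_version,
        "changed_by": changed_by,
        "decision": decision,
    }


record = audit_record(
    prompt_template="Assess credit risk for {ticker} given {ratios}.",
    model_snapshot="example-model-2025-01-01",  # hypothetical snapshot name
    code_version="abc1234",                     # e.g. a git commit SHA
    dataset_version="v3",
    changed_by="analyst@example.com",
    decision={"ticker": "ACME", "risk": "high"},
)
line = json.dumps(record, sort_keys=True)  # one append-only log line per decision
```

Storing the hash rather than relying on a prompt name means two records can be compared to tell whether the prompt actually changed between two decisions.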

Observability inherently deals with what agents “see” and “say,” which means it touches sensitive data: raw user queries, retrieved contracts, and financial records.

The “log everything” approach is dangerous. Best practices include:

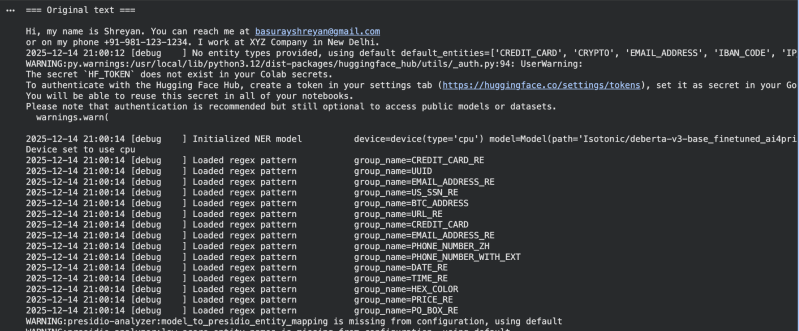

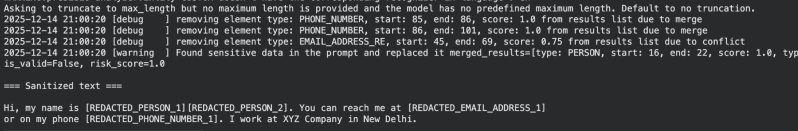

For example, using a library like llm-guard, you can create a PII anonymisation helper:

```python
from llm_guard.input_scanners import Anonymize
from llm_guard.vault import Vault

# The vault keeps the original values so placeholders can be reversed later.
vault = Vault()
# Build the scanner once rather than repeating its setup on every call.
scanner = Anonymize(vault, language="en")

def sanitize_text(text: str) -> str:
    # scan() returns the sanitised text, a validity flag, and a risk score.
    sanitized, _is_valid, _risk_score = scanner.scan(text)
    return sanitized
```

The scanner replaces PII with placeholders like [REDACTED_PERSON_1] and [REDACTED_EMAIL_ADDRESS_1], while the vault stores originals for later de-anonymisation if needed. The structure and meaning of the text remain intact for debugging purposes.

One of the most important developments in agent observability is the convergence on OpenTelemetry (OTEL) as a common standard. OpenTelemetry’s GenAI Special Interest Group is actively defining semantic conventions for AI agent telemetry: standardised attributes in the gen_ai namespace, such as gen_ai.agent.id, gen_ai.tool.name, and gen_ai.request.model, that any compliant monitoring tool can interpret.

This matters because it prevents vendor lock-in. If your agents emit traces using OpenTelemetry conventions, those traces can flow into any compatible backend.

If you already have agents instrumented with OpenTelemetry (perhaps an OpenAI agent or an Amazon Bedrock agent using the AWS Distro for OpenTelemetry), you can route those traces directly to Weave.
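A minimal sketch of that routing is shown below. It only builds the endpoint and headers for an OTLP exporter using the standard library. The endpoint URL, the `api:<key>` Basic-auth format, and the `project_id` header are assumptions based on Weave's documented OTEL ingestion and should be checked against the current docs; `weave_otlp_headers` is a hypothetical helper name.

```python
import base64

# Assumed Weave OTLP endpoint; verify against the current Weave documentation.
WEAVE_OTLP_ENDPOINT = "https://trace.wandb.ai/otel/v1/traces"


def weave_otlp_headers(api_key: str, project_id: str) -> dict:
    # Assumption: Weave expects HTTP Basic auth with user "api" and the W&B API
    # key as the password, plus a project_id header of the form "entity/project".
    token = base64.b64encode(f"api:{api_key}".encode()).decode()
    return {"Authorization": f"Basic {token}", "project_id": project_id}


headers = weave_otlp_headers("YOUR_WANDB_API_KEY", "my-team/finance-agents")

# With the opentelemetry-exporter-otlp-proto-http package installed, these
# values would be passed to the exporter, for example:
#   from opentelemetry.exporter.otlp.proto.http.trace_exporter import OTLPSpanExporter
#   exporter = OTLPSpanExporter(endpoint=WEAVE_OTLP_ENDPOINT, headers=headers)
```

In practice the API key would come from an environment variable or secret store rather than a literal in code.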

Once configured, any OTEL trace data your agent emits will appear in your Weave project alongside traces from @weave.op()-decorated functions. This means you can aggregate traces from multiple sources (different frameworks, different languages, different services) into a single, unified view.

The standardisation effort is still evolving, but the direction is clear: whether you’re using a commercial tool or an open-source logger, adherence to OpenTelemetry’s GenAI conventions will make them interoperable.

Don’t treat observability as an afterthought. Treat @weave.op() as the default way to write any agent function. Design your workflows so that every logical step (ingestion, reasoning, formatting) is a separate, traceable unit. Use consistent naming conventions (e.g., verb_noun like calculate_risk, fetch_data) to make traces easy to navigate.

Observability should shift left into your CI/CD pipeline. Every Pull Request should trigger a small evaluation suite. If the metrics regress, for example, a new prompt causes a 5% drop in accuracy, the PR should be blocked. Larger evaluation suites can run nightly. This treats “quality” as an observable metric just like “latency.”
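A regression gate of this kind can be sketched in a few lines. The function below compares candidate metrics against a baseline and reports any metric that dropped by more than a threshold. It assumes higher is better for every metric and treats the 5% as an absolute drop of 0.05; `regression_gate` and the metric names are illustrative.

```python
def regression_gate(baseline: dict, candidate: dict, max_drop: float = 0.05) -> list:
    """Return a list of human-readable failures; an empty list means the PR may pass."""
    failures = []
    for metric, base_value in baseline.items():
        new_value = candidate.get(metric)
        if new_value is None:
            failures.append(f"{metric}: missing from candidate run")
        elif base_value - new_value > max_drop:
            failures.append(
                f"{metric}: dropped from {base_value:.2f} to {new_value:.2f}"
            )
    return failures


baseline = {"accuracy": 0.90, "tool_call_success": 0.98}
candidate = {"accuracy": 0.80, "tool_call_success": 0.97}
failures = regression_gate(baseline, candidate)

# In a CI job, a non-empty list would fail the build, for example:
#   import sys
#   if failures:
#       print("\n".join(failures))
#       sys.exit(1)
```

Here the accuracy drop of 0.10 exceeds the threshold and blocks the change, while the small dip in tool call success does not.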

Over-logging: It’s easy to log so much that dashboards become noisy and costs explode. Define a logging budget. Use sampling: log 100% of failures but only a fraction of successful requests.

Under-logging: Conversely, “flying blind” occurs when key steps lack instrumentation. Enforce a rule that every external tool call and every error must be traced.

Vanity metrics: Metrics like “total requests” look good on a slide but don’t tell you if users are happy. Focus on outcome metrics: task success rates, risk limit breaches, user feedback scores.

Broken traces: In multi-agent systems, traces often break when context isn’t passed correctly between services. Use standard correlation IDs or OpenTelemetry context propagation to stitch together the full story.

Ignoring evals: Monitoring without evaluation is like watching a plane’s instruments without knowing where it should be flying. Build evals into the pipeline from day one.
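The sampling rule from the over-logging pitfall above (keep every failure, keep only a fraction of successes) can be sketched as follows. Hashing the trace ID, rather than drawing a random number per span, is a design choice made for this example so that every span in a trace gets the same decision and traces are not left half-logged; `should_log` is a hypothetical helper.

```python
import hashlib


def should_log(trace_id: str, is_failure: bool, success_sample_rate: float = 0.1) -> bool:
    # Failures are always kept: they are the traces you will need to debug.
    if is_failure:
        return True
    # Map the trace ID to a stable bucket in [0, 10000) and keep the lowest
    # buckets, so the same trace ID always yields the same decision.
    bucket = int(hashlib.sha256(trace_id.encode("utf-8")).hexdigest(), 16) % 10_000
    return bucket < success_sample_rate * 10_000


kept = sum(should_log(f"trace-{i}", is_failure=False) for i in range(1000))
```

With the default rate, `kept` lands near 100 out of 1000 successful traces, while any trace flagged as a failure is always retained.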

AI agent observability has shifted from “nice-to-have” to a foundational requirement for any production-grade multi-agent system, especially in domains like finance where agents touch capital, customer data, and regulatory obligations in every decision.

By moving beyond traditional APM and infrastructure metrics, observability reframes the core question from “Is the service up?” to “Is the agent thinking correctly, with the right data, under the right constraints?” Answering that question requires treating prompts, intermediate reasoning, tool calls, and final outputs as first-class telemetry.

Across the five pillars (Monitoring, Tracing, Logging, Evaluation, and Governance), we now have both a mental model and a working implementation for making agent behaviour transparent. The financial analysis pipeline demonstrated how decorating each agent step with @weave.op() turns a standard Python script into a fully traceable multi-agent workflow, with every call visible as a span that can be inspected, filtered, and replayed.

From here, the path forward is clear: extend this pattern to real data sources, plug in continuous eval suites for regression testing, layer in guardrails and human review, and scale from a single notebook to an enterprise-grade, multi-agent stack. Tools like W&B Weave make this practical today, while the OpenTelemetry standardisation effort ensures your instrumentation won’t lock you into a single vendor tomorrow.

In a world of non-deterministic, tool-calling agents, observability is not just instrumentation; it’s how reliability, safety, and trust are engineered into AI systems from day one.