
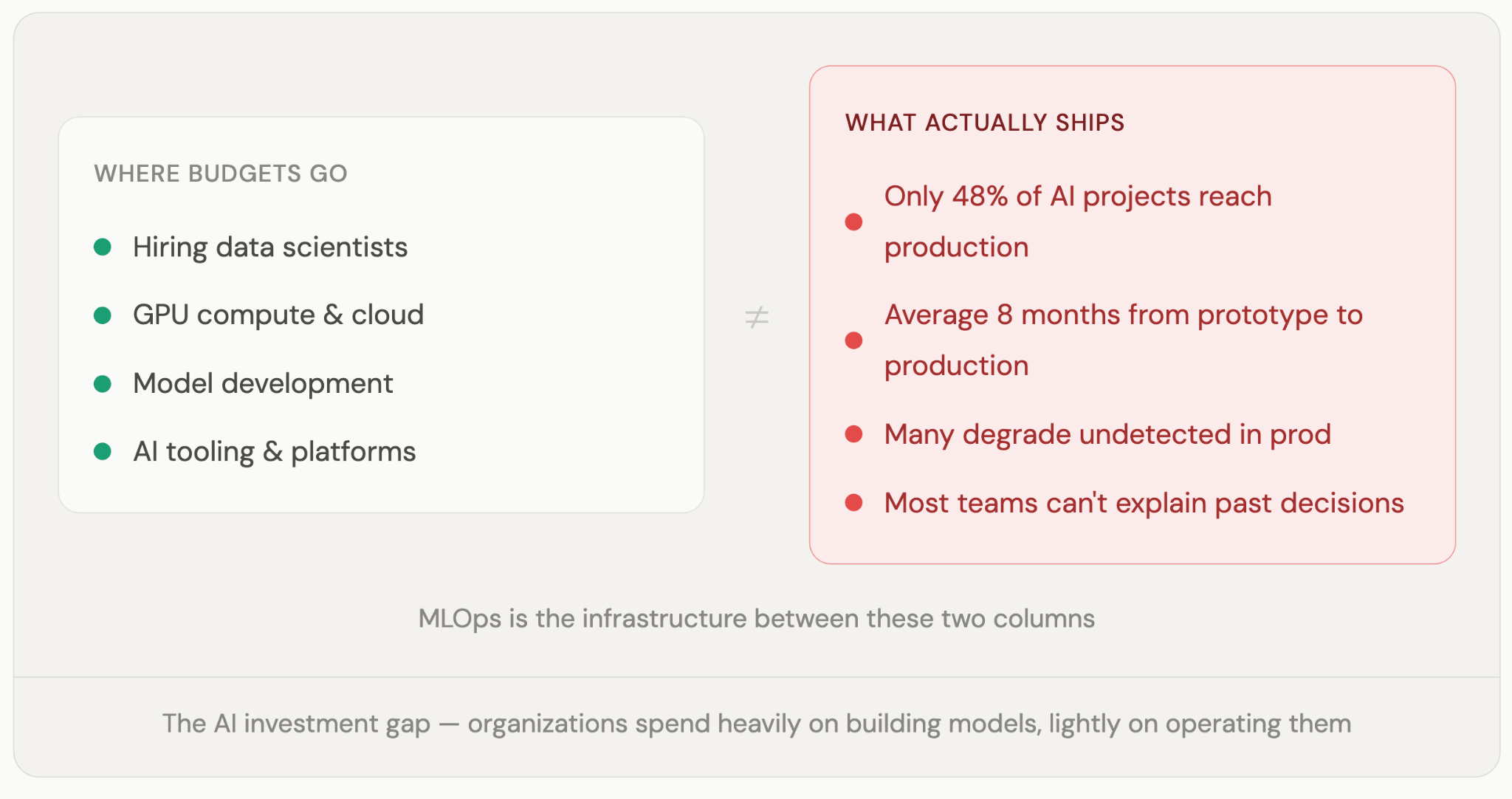

Most AI investments don’t underperform because the models are bad. They underperform because the infrastructure to support them was never built.

Many organizations have spent years funding AI, but find the models still aren’t working the way anyone hoped.

The pattern is familiar. Budgets were approved. Talented teams were hired. Initiatives launched. A few made it to production, and those are now quietly degrading. No one has flagged it yet.

The models weren’t the problem. The systems around them were.

That’s the gap MLOps closes.

This guide explains what MLOps is, why it’s a business problem as much as a technical one, and how platforms like Weights & Biases give organizations the visibility and control they need to turn AI investments into AI outcomes. It also covers how MLOps fits alongside DevOps and LLMOps, and what a practical strategy looks like for leaders who need their AI programs to start delivering.

Most executives who’ve invested seriously in machine learning know the feeling: the model demo was impressive, the AI team is talented, and yet somehow the project still isn’t in production six months later. Or it is in production, and nobody’s quite sure if it’s still working right. Both situations are more common than anyone likes to admit, and both have the same root cause.

MLOps is the answer to that root cause. It’s the operational backbone that determines whether your AI investments deliver consistent value or produce models that work in notebooks and nowhere else, with questionable ROI to match. The organizations running fifty governed, monitored models in production aren’t luckier or more talented than the ones running five fragile pilots. They just invested earlier in the systems that make machine learning repeatable.

As regulatory pressure grows, from the EU AI Act to sector-specific requirements in financial services and healthcare, MLOps is also becoming the difference between organizations that can demonstrate accountability for their models and those that discover, mid-audit, that they cannot. Done right, it shortens deployment cycles, reduces operational risk, and gives leadership the visibility to make real decisions. Done wrong, or not done at all, you’re running blind.

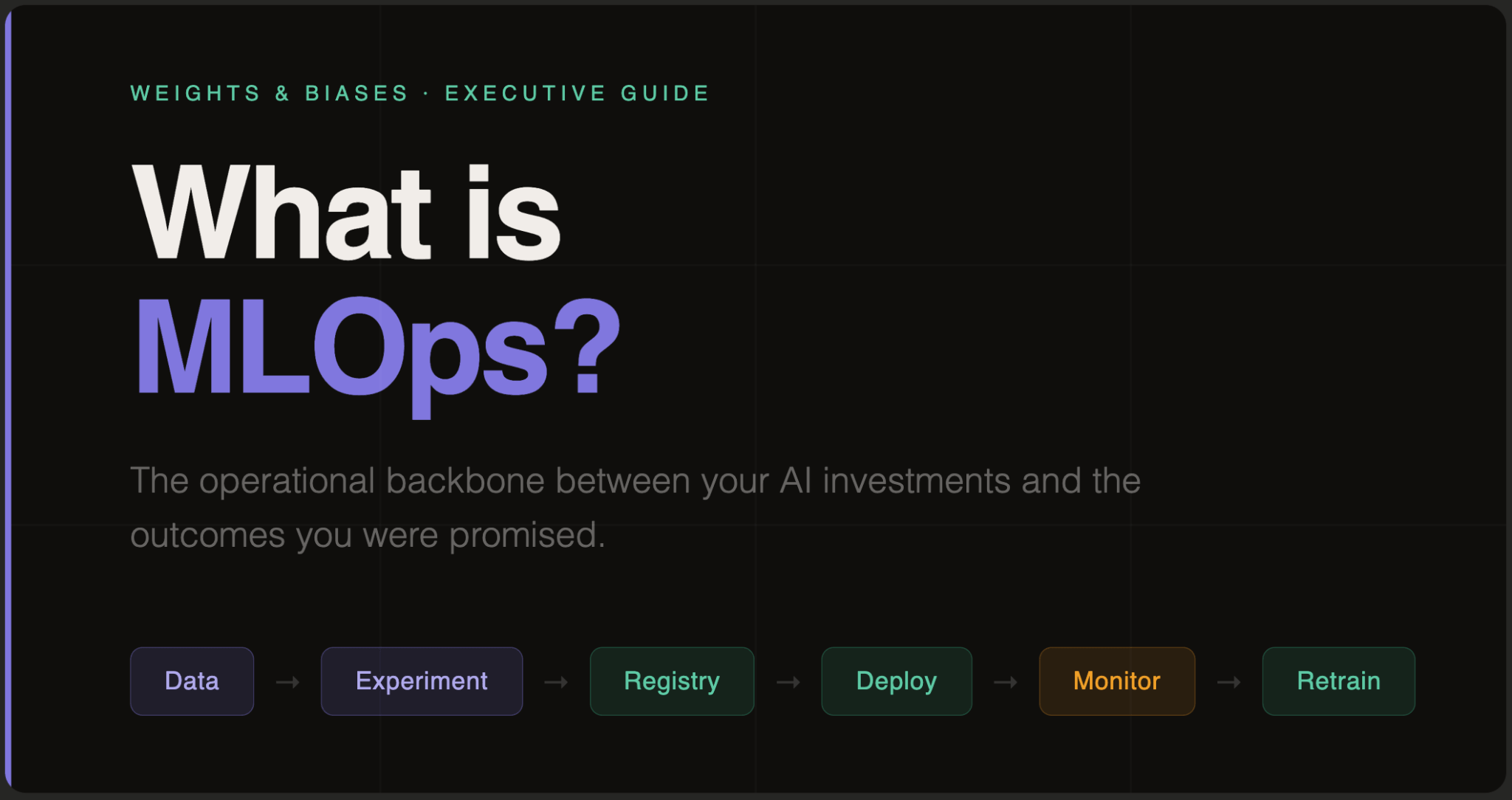

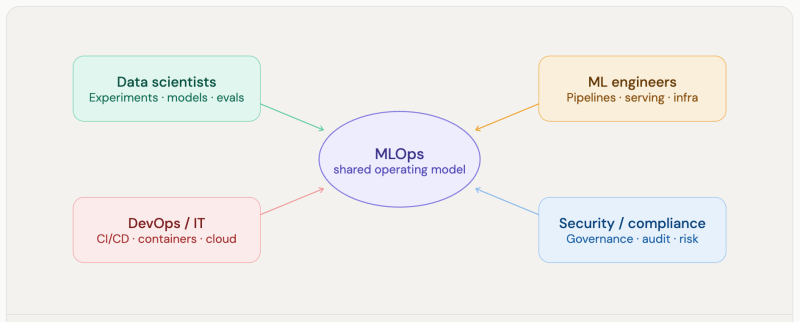

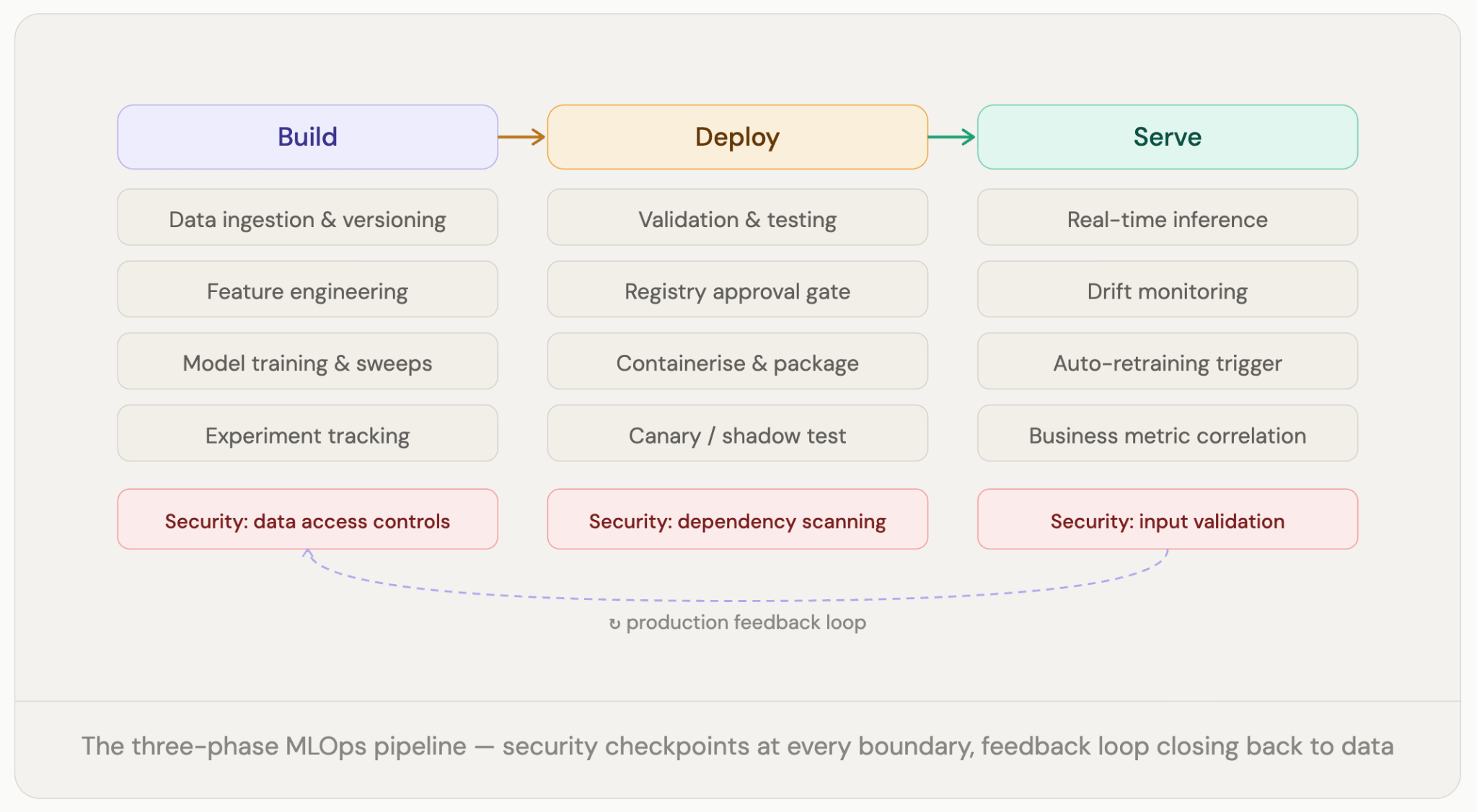

MLOps (machine learning operations) is the engineering discipline that keeps ML models working reliably in production. It combines machine learning, DevOps, and data engineering to automate the end-to-end lifecycle of models, from build to deployment, monitoring, and maintenance.

The core components are straightforward: version control for data and models, CI/CD pipelines for testing and deployment, monitoring for accuracy and data drift, and governance frameworks for compliance and auditability. Tools like Weights & Biases handle experiment tracking and model registry; platforms like Kubeflow and GitHub Actions manage pipeline automation.

The business case is equally straightforward. MLOps reduces the time it takes to move from experimentation to production, keeps models performing as intended after they ship, and lets teams manage hundreds or even thousands of models without losing visibility into any of them.

But here’s what the definitions usually leave out.

A model isn’t done when a machine learning engineer declares it done. It’s done when it’s versioned, tested, deployed to infrastructure it’s never run on before, monitored against data it’s never seen, governed for compliance with regulations that may not have existed when it was built, and recoverable in under an hour when something breaks.

That’s not machine learning or data science. That’s operations. And many organizations are trying to do it without the infrastructure that operations requires.

Here’s what “models degrade silently” actually looks like in practice.

A bank trains a fraud detection model in January on twelve months of transaction data. It performs well on validation: 94% accuracy, low false-positive rate. It goes live in Q2. Through spring and summer, consumer behavior shifts in ways nobody explicitly tracks: a new category of Buy Now Pay Later merchants, slightly different timing patterns driven by economic pressure, and a cohort of customers who changed jobs and whose spending looks different now. Nothing dramatic. The model’s accuracy on its original test set is still fine. But its accuracy on real fraud is quietly declining, and nobody has the monitoring in place to see it.

By Q4, chargebacks have ticked up. The fraud team assumes it’s seasonal. It isn’t. The model is eleven points less accurate than it was in January, and the bank spent nine months not knowing.

This is not an edge case. It’s the default outcome when machine learning goes to production without continuous monitoring. Data changes. Models don’t. Without MLOps, the gap between them widens in silence until something expensive makes it visible.
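A minimal sketch of the kind of check that would have caught the fraud model’s decline is shown here. It assumes ground-truth labels (for example, confirmed chargebacks) eventually arrive for each prediction. The `AccuracyMonitor` class, its window size, and its alert threshold are illustrative assumptions, not part of any specific platform.

```python
from collections import deque


class AccuracyMonitor:
    """Tracks accuracy over the most recent labeled predictions (hypothetical helper)."""

    def __init__(self, baseline: float, window: int = 500, max_drop: float = 0.05):
        self.baseline = baseline              # accuracy measured at validation time
        self.max_drop = max_drop              # tolerated drop before alerting (assumed value)
        self.outcomes = deque(maxlen=window)  # True where the prediction was correct

    def record(self, predicted, actual) -> None:
        self.outcomes.append(predicted == actual)

    @property
    def accuracy(self) -> float:
        if not self.outcomes:
            return float("nan")
        return sum(self.outcomes) / len(self.outcomes)

    def degraded(self) -> bool:
        # Wait for a full window so a handful of early errors does not trigger an alert.
        if len(self.outcomes) < self.outcomes.maxlen:
            return False
        return self.baseline - self.accuracy > self.max_drop


monitor = AccuracyMonitor(baseline=0.94, window=100, max_drop=0.05)

# 94 correct out of 100: matches the validation baseline.
for i in range(100):
    monitor.record(predicted=1, actual=1 if i < 94 else 0)
healthy_alert = monitor.degraded()

# 83 correct out of the next 100: the eleven-point drop from the example above.
for i in range(100):
    monitor.record(predicted=1, actual=1 if i < 83 else 0)
degraded_alert = monitor.degraded()
```

In practice the alert would feed a paging or ticketing system, and the window would be sized to how quickly labels arrive.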

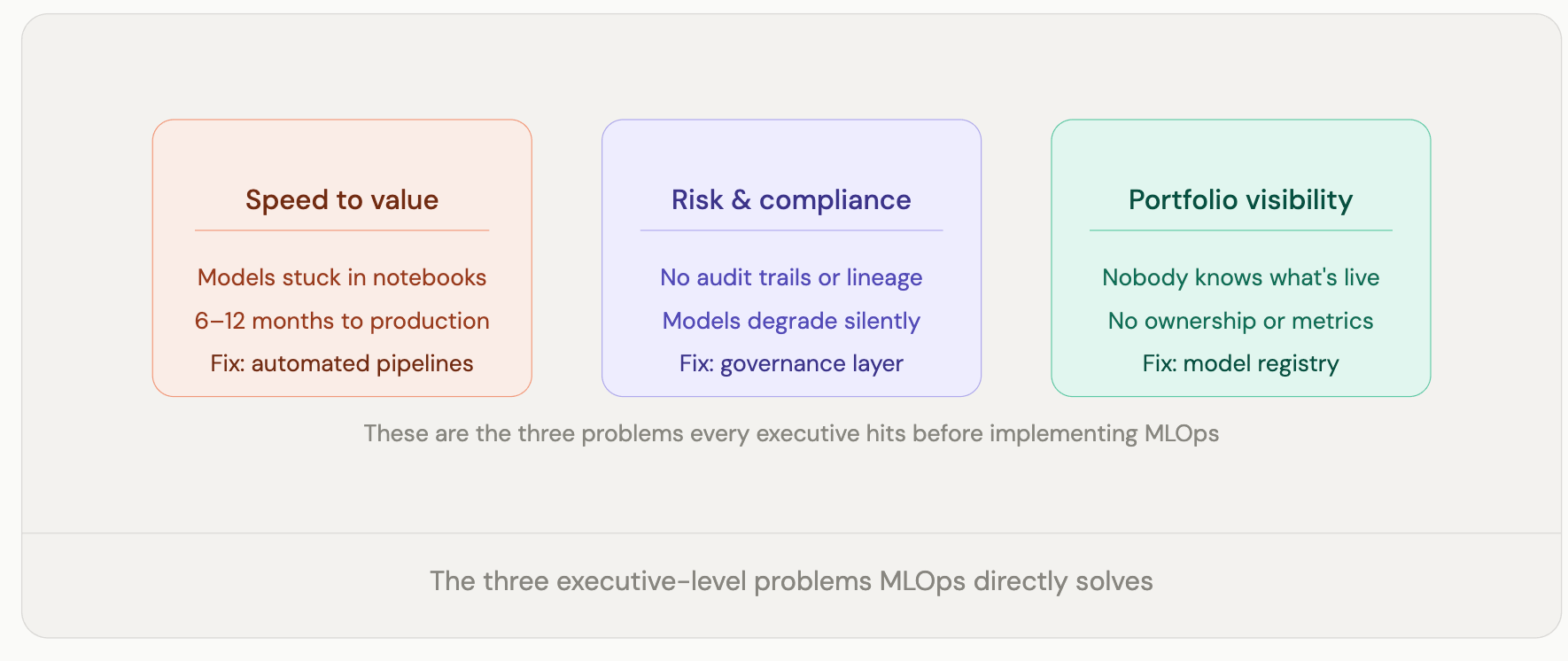

There’s also the prototype-to-production problem, which is separate and equally costly. A machine learning engineer can build a model that performs beautifully in a notebook. Keeping it accurate, governed, and trustworthy for the two or three years it runs in production is the hard part. Without MLOps, that transition is slow, error-prone, and dependent on individual effort that doesn’t scale and doesn’t survive when someone leaves the team.

Every model running in production without monitoring is a reputational risk that hasn’t materialized yet. Every model deployed without governance is a compliance exposure waiting to be triggered. Every data scientist spending half their week on manual deployment work is talent being wasted on a problem MLOps is specifically designed to solve.

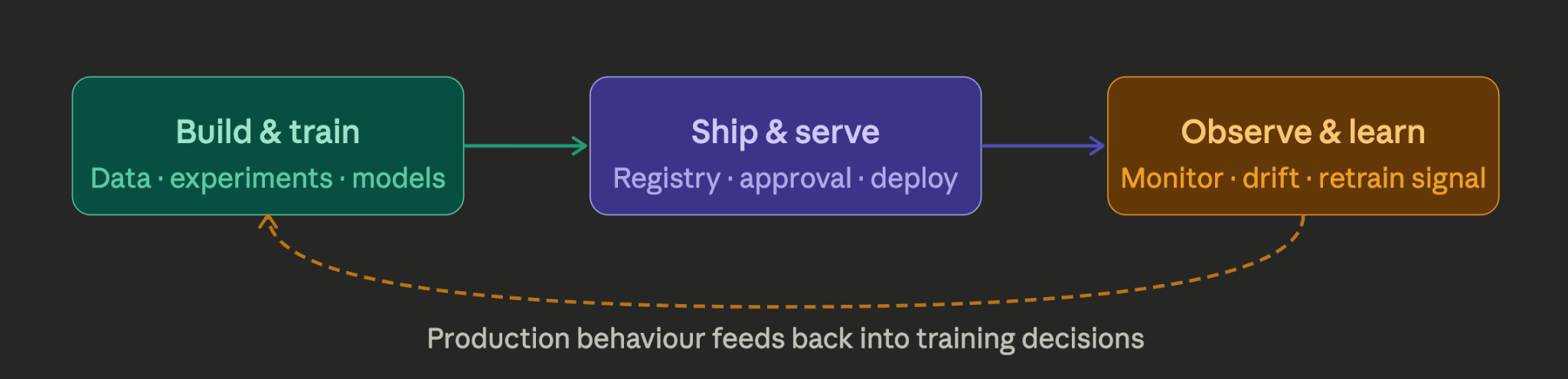

Machine learning doesn’t follow a straight line from data to deployment. It’s a loop. Production behavior feeds back into training decisions, better models change what production looks like, and monitoring closes the gap between the two. MLOps is the infrastructure that keeps that loop running, so each handoff between build, ship, and observe happens reliably rather than breaking down and waiting for someone to pick up the pieces manually.

If your organization already runs mature DevOps, you have a head start, but not as big a one as most engineering teams assume. The instincts carry over: automate what can be automated, version everything, test before you ship, and watch what happens after. All of that still applies.

But here’s where it breaks down. When you ship software, the code sits there and does its job. It does not change on its own. A machine learning model does not work that way. You can put a model into production, not touch it for six months, and it quietly gets worse. Not because anything broke, but because the world it is running on changed, and nobody noticed. User behavior shifted. New patterns emerged that the training data never captured. The model was never told. That kind of failure does not happen with normal software. Your DevOps tools are not looking for it.
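One common way to look for this kind of shift, even before labels are available, is to compare the distribution of an input feature in production against the training data. The sketch below uses the Population Stability Index (PSI). The function name, bin count, and sample data are assumptions made for illustration, and the 0.25 threshold is a widely used rule of thumb rather than a standard.

```python
import math
from typing import Sequence


def population_stability_index(
    expected: Sequence[float],
    actual: Sequence[float],
    bins: int = 10,
    eps: float = 1e-6,
) -> float:
    """PSI between a training-time sample and a production sample of one feature."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # guard against a constant feature

    def bucket_shares(values: Sequence[float]) -> list:
        counts = [0] * bins
        for v in values:
            # Clamp so production values outside the training range land in the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so empty bins do not produce log(0).
        return [max(c / len(values), eps) for c in counts]

    e, a = bucket_shares(expected), bucket_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Synthetic data for illustration only: a feature spread evenly over 0..99 in training.
training = [i % 100 for i in range(1000)]
production_same = list(training)
production_shifted = [v * 0.5 + 50 for v in training]  # squeezed into the upper half

psi_same = population_stability_index(training, production_same)
psi_shifted = population_stability_index(training, production_shifted)

DRIFT_THRESHOLD = 0.25  # rule-of-thumb cutoff for a significant shift (assumption)
drift_detected = psi_shifted > DRIFT_THRESHOLD
```

A check like this runs per feature on a schedule. It does not say whether the model is wrong, only that the inputs no longer look like the data it was trained on.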

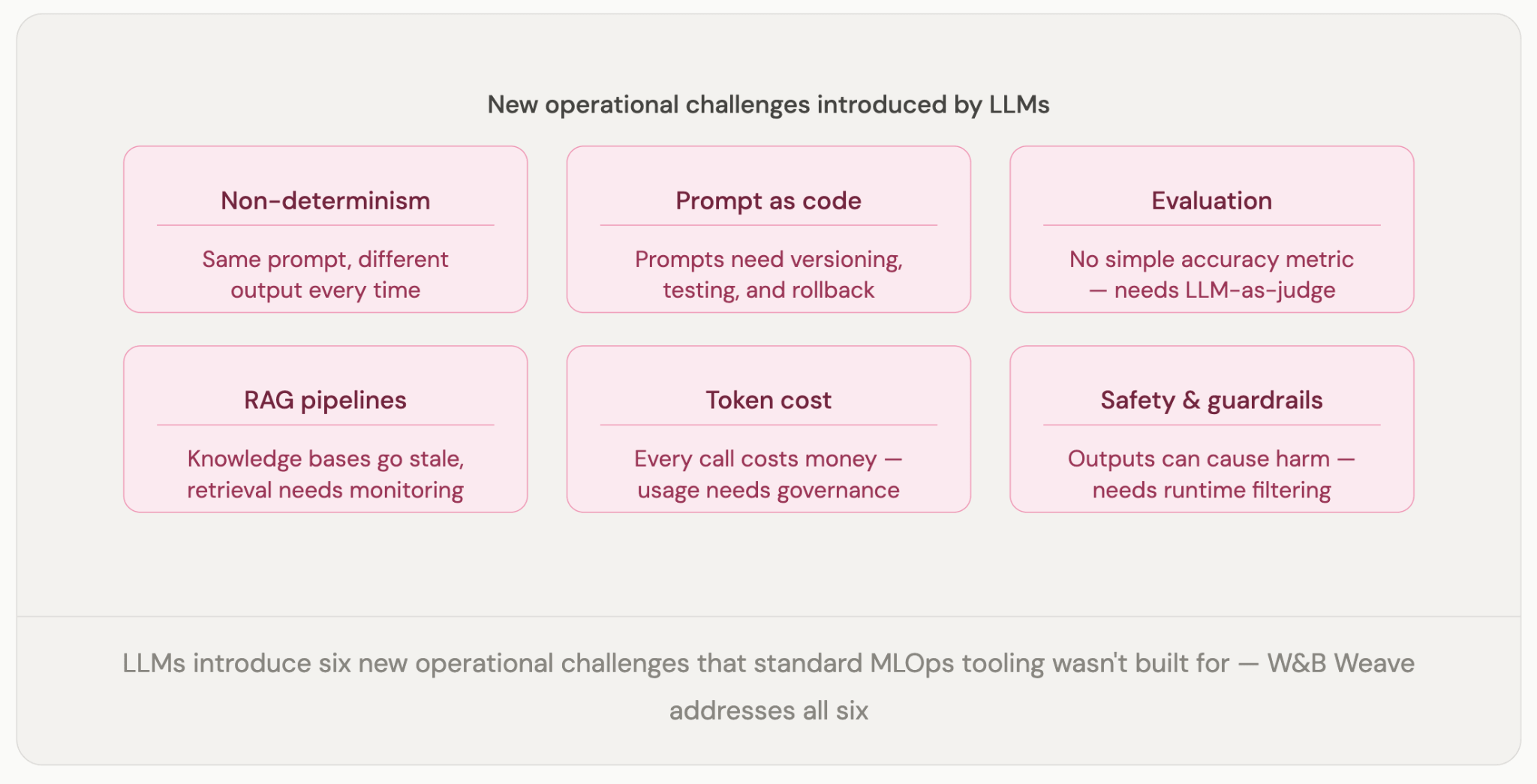

Large language models make it even more complicated. With an LLM, the prompt is part of the system. Change a word, and you can change the output entirely. There is often no clean right or wrong answer to check against. The information the model draws on gets outdated. Every inference costs money. None of that fits neatly into the monitoring and testing frameworks most teams already have in place.
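Because a one-word prompt change can alter behavior, one simple practice is to treat the prompt, model name, and sampling parameters as a single versioned unit. The sketch below derives a short content hash for that unit so every logged output can be tied to the exact prompt that produced it. The function name, the `example-model` identifier, and the parameter values are hypothetical.

```python
import hashlib
import json


def prompt_version(template: str, model: str, params: dict) -> str:
    """Short content hash that changes when the prompt, model, or parameters change."""
    payload = json.dumps(
        {"template": template, "model": model, "params": params},
        sort_keys=True,  # stable key order so identical configs hash identically
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


v1 = prompt_version(
    "Summarize the ticket in one sentence.", "example-model", {"temperature": 0.2}
)
v2 = prompt_version(
    "Summarize the ticket in one paragraph.", "example-model", {"temperature": 0.2}
)
changed = v1 != v2  # a single-word edit yields a different version identifier
```

Storing this identifier alongside each traced call makes it possible to compare evaluation results and cost across prompt versions.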

You do not need a separate team for this. But you do need to think about it differently. The question is not whether your current tools can technically handle machine learning. Most can. The question is whether they were designed for it. They were not.

The most immediate payoff is that things stop failing silently. Versioned and monitored models fail less often, and when they do, teams find out in minutes rather than months. That alone changes the risk calculus around scaling an AI portfolio. An organization that knows when a model degrades can afford to run fifty of them. One that finds out through customer complaints cannot.

The efficiency case is straightforward once you have seen what manual machine learning deployment actually looks like. Engineers spending three weeks on deployment work every time a model ships is not the highest and best use of that talent. Automated pipelines give that time back, and the productivity gap between teams with them and those without compounds quickly.

Compliance is no longer a reason to defer MLOps. It is a reason to accelerate it. Regulators across financial services, healthcare, and insurance are asking, by name, for audit trails, lineage records, and evidence that models are monitored and governed. The EU AI Act’s enforcement window is open. Organizations that have the infrastructure in place will handle the first audit without disruption. Organizations that don’t will spend six months retrofitting it under pressure, which always costs more and always misses something.

There is also a leadership visibility problem that rarely gets named directly. Without MLOps, most executives have no reliable way to know how their models are actually performing. That is not a technical failure. It is a governance one.

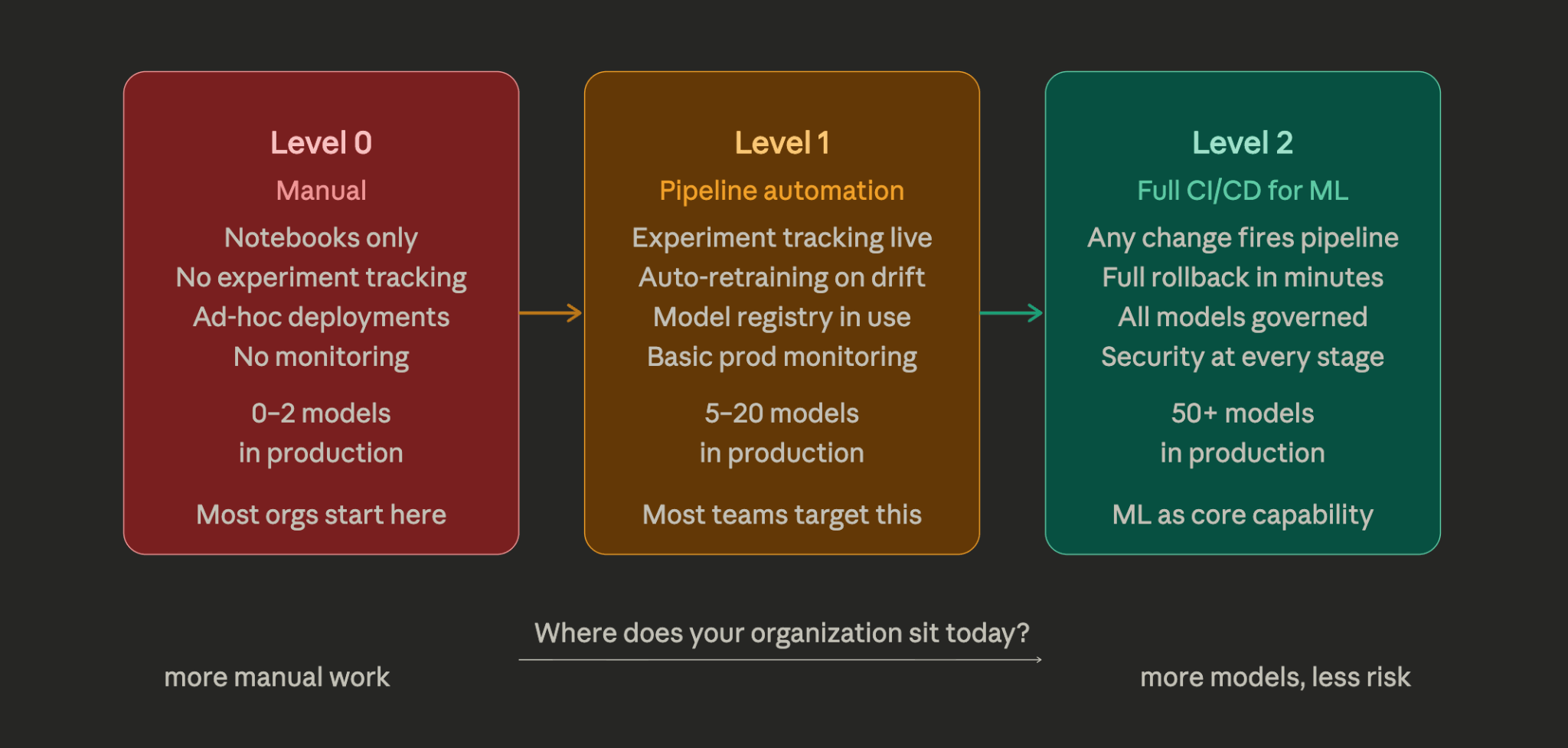

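Google Cloud’s MLOps framework defines three levels of automation maturity (Level 0, manual process; Level 1, ML pipeline automation; Level 2, CI/CD pipeline automation), and it’s become the most useful shorthand the industry has for diagnosing where an organization actually is versus where it thinks it is.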

Most organizations are at Level 0 and think they’re at Level 1. The tell is usually the model registry: if it’s a shared folder or an S3 bucket with an informal naming convention, that’s Level 0 governance dressed up in Level 1 language.

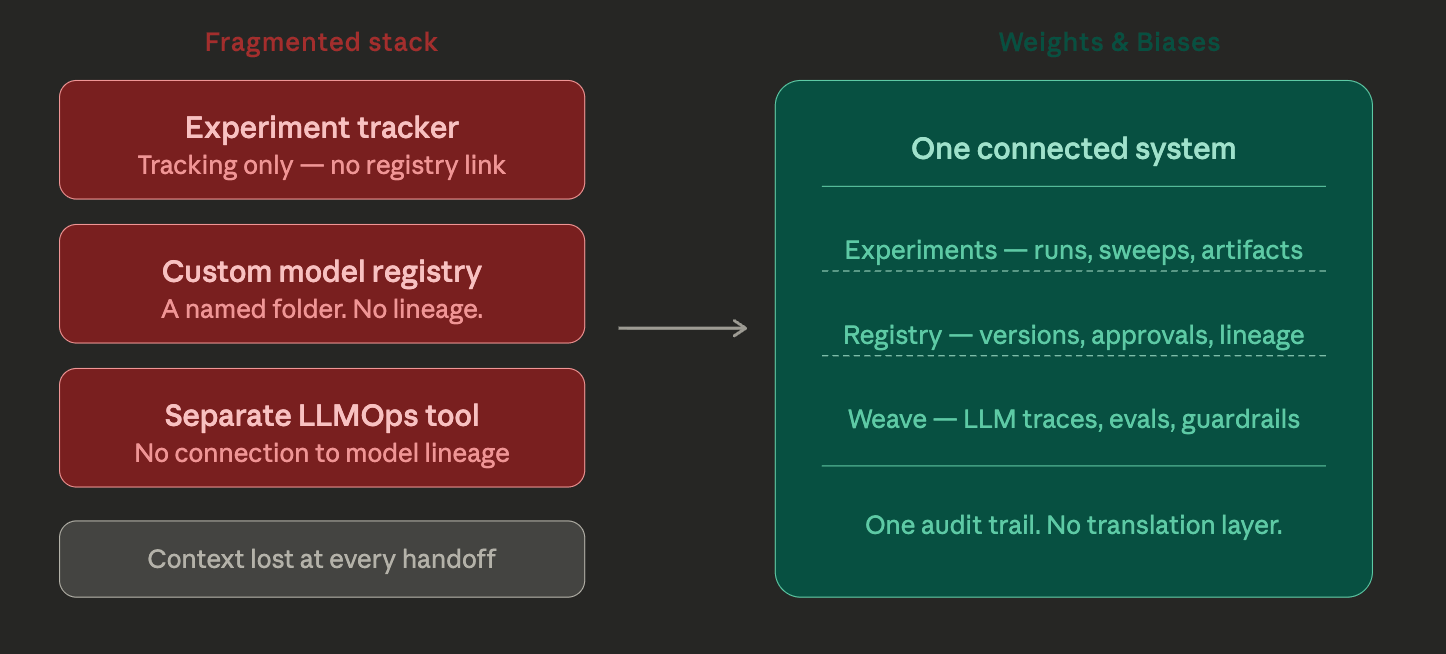

Most MLOps tool stacks are assembled from parts: an experiment tracker here, a model registry there, a separate monitoring solution, and another tool for LLMs. It works, technically. But the friction of moving data and context between disconnected systems is a real cost, and it tends to accumulate in the places that matter most: handoffs between experimentation and deployment, and between deployment and production observability.

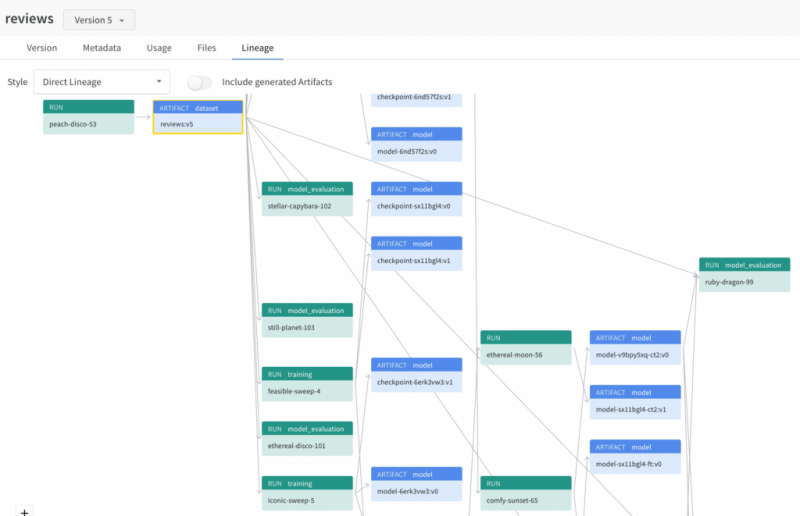

Weights & Biases was designed as a unified system for the full machine learning lifecycle. What a machine learning engineer logs during experimentation is the same record the registry pulls from during approval, and the same lineage the monitoring system references when an alert fires. No translation layer. No context lost between stages.

W&B Experiments automatically tracks every training run: parameters, metrics, system stats, and artifacts. W&B Registry adds versioning, approval workflows, and production aliases with full lineage back to the original experiment. W&B Weave extends the same approach to LLMOps: tracing LLM calls, running structured evaluations, managing prompts, and monitoring production agents. One platform, one audit trail, from first notebook to production LLM.

ML expands your attack surface in ways that traditional security tooling wasn’t designed to catch. Application code has a well-understood threat model. ML adds three new layers that most security teams haven’t fully mapped: the data layer, where training datasets can be poisoned; the model layer, where weights can be stolen through repeated inference queries; and the serving layer, where carefully crafted inputs can flip model outputs in ways that are invisible to standard monitoring.

There’s also an infrastructure problem that’s less exotic but more common. Jupyter notebooks running with broad permissions are a well-documented entry point into production infrastructure. It’s an actively exploited pattern in ML environments, and it’s almost entirely an organizational failure rather than a technical one: the result of moving fast without applying the same access controls to data science infrastructure that you’d apply to everything else. MLOps governance closes that gap.

W&B addresses this through role-based access on the registry, audit logs on every artifact and model promotion, and approval gates that mean nothing reaches production without an explicit sign-off. Every action is recorded. Every version is traceable back to the dataset and training run that produced it.
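To make the pattern concrete, here is a minimal in-memory sketch of an approval gate with an audit log and lineage fields. It is not the W&B API. The `ModelRegistry` and `ModelVersion` classes, their methods, and the identifiers used are hypothetical, and a real registry would add authentication, persistence, and role checks.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class ModelVersion:
    version: int
    dataset_id: str  # lineage: which dataset produced this model
    run_id: str      # lineage: which training run produced this model
    approved_by: Optional[str] = None


@dataclass
class ModelRegistry:
    versions: dict = field(default_factory=dict)   # version number -> ModelVersion
    aliases: dict = field(default_factory=dict)    # alias name -> version number
    audit_log: list = field(default_factory=list)  # (timestamp, actor, action)

    def _audit(self, actor: str, action: str) -> None:
        stamp = datetime.now(timezone.utc).isoformat()
        self.audit_log.append((stamp, actor, action))

    def register(self, actor: str, dataset_id: str, run_id: str) -> ModelVersion:
        mv = ModelVersion(len(self.versions) + 1, dataset_id, run_id)
        self.versions[mv.version] = mv
        self._audit(actor, f"registered v{mv.version}")
        return mv

    def approve(self, actor: str, version: int) -> None:
        self.versions[version].approved_by = actor
        self._audit(actor, f"approved v{version}")

    def promote(self, actor: str, version: int, alias: str = "production") -> None:
        # The gate: nothing gets an alias without an explicit sign-off.
        if self.versions[version].approved_by is None:
            self._audit(actor, f"blocked promotion of v{version} (no sign-off)")
            raise PermissionError(f"v{version} has no sign-off")
        self.aliases[alias] = version
        self._audit(actor, f"promoted v{version} to {alias}")


registry = ModelRegistry()
v1 = registry.register("alice", dataset_id="transactions-2024", run_id="run-001")

blocked = False
try:
    registry.promote("alice", v1.version)  # rejected: nobody has approved it yet
except PermissionError:
    blocked = True

registry.approve("bob", v1.version)
registry.promote("alice", v1.version)      # succeeds after sign-off
```

Note that the blocked attempt is itself written to the audit log, which is the kind of record an auditor tends to ask for.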

For regulated industries, that traceability isn’t a nice-to-have. It’s what you show the auditor.

Most MLOps conversations in the boardroom go wrong because they start with tools. Someone in engineering needs a budget for a platform, IT has concerns about integration, a vendor gets invited to present, and the whole thing becomes a procurement decision. A procurement decision isn’t a strategy.

A real strategy starts with a different question:

What would it look like if our ML actually worked reliably at scale, and what’s standing between here and there?

That question almost never surfaces a tooling gap. It surfaces process gaps, ownership gaps, and accountability gaps. The data science team ships a model, and then nobody is quite sure who owns it in production. That’s not a problem any platform solves.

Think of it this way. One high-performing model is a project. The ability to deploy, monitor, and update fifty models across multiple teams, with confidence that each one is governed, each one will alert before it fails, and each one has a clear owner who’ll pick up the phone, is a capability. MLOps strategy means investing in the capability, not the project.

That ownership question, who is accountable for a model from training through to its behavior in production six months later, is the one most organizations haven’t answered clearly. Tooling can’t answer it for you. But once you’ve answered it, the tooling becomes much easier to choose.

MLOps won’t make your models smarter. That’s not the job. The job is making sure your models are still working six months after deployment, that the team knows when they aren’t, that there’s a clear owner for each one, and that updating them doesn’t require a massive engineering effort every time. That’s a lower bar than it sounds, and most organizations aren’t clearing it.

As LLMs and agents move from experiments into production, the stakes get higher, not lower. LLMOps is the same discipline applied to systems that are harder to evaluate, faster to degrade, and more expensive to operate. The organizations that build the MLOps muscle now will be the ones that can actually govern what comes next. The ones that skip it will still be building the foundations when everyone else is already operating at scale.