AI systems are evolving beyond single models handling tasks in isolation. Instead, intelligent multi-agent AI systems are emerging as a means to distribute work across multiple specialized agents, enabling collaboration, iterative refinement, and more dynamic decision-making. Rather than relying on a single model to generate and verify outputs independently, multi-agent systems introduce structured interactions where agents communicate, challenge assumptions, and improve results through collective reasoning.

This article will examine how multi-agent AI systems operate, their key distinctions from single-agent models, and the advantages they provide. We’ll also cover different types of multi-agent systems, real-world applications, and the challenges that come with building and deploying them.

What are agentic AI systems?

An AI agent is an intelligent system designed to achieve specific goals by planning, using external tools, retaining memory, and adapting over time. Unlike traditional automation, which follows rigid, predefined rules, AI agents dynamically process information, make decisions, and refine their approach based on the feedback they receive.

While chatbots primarily engage in conversations and require user input at every step, AI agents can carry a task forward without a prompt at each step. They don’t just generate responses; they take action, interact with external systems, and manage multi-step workflows without constant supervision.

Some key components of AI agents include:

- Tools: Connect to APIs, databases, and software to extend functionality.

- Memory: Store information across tasks to improve consistency and recall.

- Continual learning: Adapt and refine strategies based on past performance.

- Orchestration: Manage multi-step processes, break down tasks, and coordinate with other agents.
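To make these components concrete, here is a minimal sketch of how tools, memory, and orchestration might fit together. The `Agent` class, the `lookup_order` tool, and the `orchestrate` helper are hypothetical names invented for illustration, and continual learning is left out to keep the example short.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Agent:
    """Toy agent: a name, a set of tools, and a memory of past steps."""
    name: str
    tools: Dict[str, Callable[[str], str]]
    memory: List[str] = field(default_factory=list)

    def act(self, tool_name: str, argument: str) -> str:
        # Tools: call an external capability by name.
        result = self.tools[tool_name](argument)
        # Memory: record the step so later steps can refer back to it.
        self.memory.append(f"{tool_name}({argument}) -> {result}")
        return result


def lookup_order(order_id: str) -> str:
    # Hypothetical stand-in for an API or database call.
    return f"order {order_id}: shipped"


def orchestrate(agent: Agent, plan: List[Tuple[str, str]]) -> List[str]:
    # Orchestration: run a multi-step plan, one tool call per step.
    return [agent.act(tool, arg) for tool, arg in plan]


agent = Agent(name="support", tools={"lookup_order": lookup_order, "shout": str.upper})
results = orchestrate(agent, [("lookup_order", "A17"), ("shout", "done")])
print(results)
print(agent.memory)
```

In a real system the plan would come from a model rather than a hard-coded list, but the division of responsibilities would look similar.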

How multi-agent AI systems differ from single-agent systems

The core difference between single-agent and multi-agent AI systems is that a single-agent system operates under one “identity” – a single model processes input, generates output, and refines its own responses internally. It relies solely on its own mechanisms for reasoning and correction, without structured external feedback.

In contrast, multi-agent AI systems distribute work across multiple agents, each with a specialized role. These agents engage in structured interactions, questioning assumptions, verifying results, and iterating on solutions. Instead of a single perspective, they incorporate multiple viewpoints, reducing the risk of unchecked errors and improving adaptability.

Multi-agent systems can be:

- Fully autonomous, operating without human intervention.

- Human-guided, where humans oversee and direct their interactions.

- Hybrid, allowing AI agents to work independently but requiring human validation at key stages.

As AI continues to evolve, multi-agent frameworks are being explored as a promising approach for handling complex workflows, reasoning across multiple perspectives, and refining outputs through collaboration.

Key benefits of intelligent multi-agent AI systems

Multi-agent AI systems improve performance, efficiency, and adaptability by distributing tasks among specialized agents rather than relying on a single model to handle everything. This approach mirrors human teamwork, where breaking down complex projects into focused roles leads to better outcomes.

One major benefit is improved task decomposition. Large language models (LLMs) struggle with long, complex instructions, but dividing a problem into smaller, well-defined steps allows each agent to focus on a specific aspect. This leads to better execution, whether in software development, research, or content creation.

Another advantage is enhanced accuracy and reliability. When multiple agents verify, critique, or refine each other’s work, the chance of errors or hallucinations decreases. Systems like ChatDev and MetaGPT introduce structured communication methods, enabling agents to challenge assumptions, refine ideas, and generate higher-quality outputs.
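The verify-and-refine idea can be sketched as a loop in which one agent critiques a draft and another revises it until the critic has no objections. This is a generic illustration, not how ChatDev or MetaGPT implement it; `toy_critic` and `toy_reviser` are hypothetical stand-ins for model calls.

```python
from typing import Callable, Optional


def refine(draft: str,
           critic: Callable[[str], Optional[str]],
           reviser: Callable[[str, str], str],
           max_rounds: int = 3) -> str:
    """Alternate critique and revision until the critic is satisfied."""
    for _ in range(max_rounds):
        feedback = critic(draft)
        if feedback is None:  # No objections: accept the draft.
            break
        draft = reviser(draft, feedback)
    return draft


def toy_critic(text: str) -> Optional[str]:
    # Hypothetical check: a temperature claim must carry a unit.
    return None if text.endswith("°C") else "add the unit"


def toy_reviser(text: str, feedback: str) -> str:
    # Hypothetical fix that only knows how to respond to "add the unit".
    return text + " °C" if feedback == "add the unit" else text


final = refine("Water boils at 100", toy_critic, toy_reviser)
print(final)
```

The `max_rounds` cap matters in practice: two agents that never agree would otherwise loop forever.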

Scalability is another key factor. A single AI model has limitations in terms of memory and processing capacity, but a network of agents can manage multiple tasks in parallel, which makes it better suited to large-scale automation. Research automation, for example, benefits from AI-driven scientists generating hypotheses, running experiments, and analyzing results concurrently.
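As a rough illustration of agents working in parallel, the sketch below fans a list of documents out to worker threads using Python's standard `concurrent.futures` module. The `summarizer_agent` function is a hypothetical placeholder for a model call.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import List


def summarizer_agent(document: str) -> str:
    # Hypothetical stand-in for a model call: keep only the first sentence.
    return document.split(".")[0].strip() + "."


def run_in_parallel(documents: List[str], max_workers: int = 4) -> List[str]:
    # Each document is handled by a worker thread; map() yields results
    # in input order, regardless of which worker finishes first.
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(pool.map(summarizer_agent, documents))


papers = [
    "Agents can plan. They also use tools.",
    "Memory matters. Retrieval is hard.",
]
summaries = run_in_parallel(papers)
print(summaries)
```

Threads suit this pattern because real agent calls spend most of their time waiting on network responses rather than computing.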

Multi-agent systems also allow for dynamic adaptability. Agents can recall past interactions, reflect on their own performance, and modify their approach based on feedback. This is particularly useful in applications where ongoing learning and refinement are needed, such as AI-driven software development or long-term project management.

Types of multi-agent AI systems

After diving into much of the latest research and attempting to build a multi-agent system myself, it seems that the largest differentiator between AI agent systems currently is the amount of human intervention involved in the system.

From my perspective, there are three main categories of multi-agent systems: autonomous, human-guided, and hybrid autonomous systems.

Note that these categories were created by me after research and experimentation. The world of agents is moving at a blistering pace, so these categories are subject to change and interpretation.

While full autonomy is often presented as the ultimate goal, I think it’s a bit of a gimmick right now. AI models struggle with consistency, long-term reasoning, long-term memory retrieval, and self-correction without human intervention. However, this could rapidly change as base models improve. For now, semi-autonomous approaches offer a more practical balance between automation and usability.

Autonomous multi-agent AI systems

These systems operate entirely without human intervention. AI agents generate ideas, critique each other, and refine their outputs iteratively. The system determines its own workflow, deciding what needs to be done and how to approach each step. These approaches are promising for large-scale research automation, but they still suffer from hallucinations, lack of true long-term memory, and difficulties with self-correction.

Some implementations focus on AI-driven research, where agents propose hypotheses, run code, analyze results, and refine conclusions. Others use adversarial setups where agents challenge each other’s findings to improve reliability. While these methods can accelerate discovery, they often produce ungrounded results or reinforce errors without human oversight.

Fully autonomous systems are still inconsistent in complex problem-solving, but as AI improves, they may be able to take on more aspects of research, engineering, and decision-making with minimal human intervention.

Human-guided multi-agent AI systems

These systems rely on humans to define and trigger each step, with AI agents executing specific tasks but not taking initiative beyond their assigned roles. Every phase is broken into discrete steps, with humans overseeing and initiating each one. In effect, the AI functions as a set of highly capable tools rather than an independent research entity.

Humans predefine each step and activate AI agents to complete individual tasks. For example, a researcher might prompt an AI to generate a literature review, then instruct another agent to summarize findings, and later request an AI-driven analysis. AI does not determine the research flow, but rather executes predefined roles within a strict pipeline, where scientists manually assign tasks such as hypothesis generation, data processing, and result verification. AI agents operate as isolated specialists, handling tasks such as code generation or statistical analysis. However, each step requires human intervention before moving forward, ensuring full control over the process.
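A human-guided pipeline of this kind might look like the sketch below, where nothing moves forward until a person approves the previous output. The three agents and the scripted `reviewer` are hypothetical; in practice `approve` would prompt a real person.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[str], str]]


def run_guided_pipeline(steps: List[Step], initial_input: str,
                        approve: Callable[[str, str], bool]) -> List[str]:
    """Run steps in order; stop as soon as the human rejects an output."""
    outputs = []
    current = initial_input
    for name, agent in steps:
        result = agent(current)
        if not approve(name, result):
            break  # Nothing moves forward without human sign-off.
        outputs.append(result)
        current = result
    return outputs


# Hypothetical single-purpose agents.
def literature_agent(topic: str) -> str:
    return f"review of {topic}"


def summary_agent(text: str) -> str:
    return f"summary of {text}"


def analysis_agent(text: str) -> str:
    return f"analysis of {text}"


def reviewer(step_name: str, output: str) -> bool:
    # Scripted reviewer that approves everything except the analysis step.
    return step_name != "analysis"


steps = [("literature", literature_agent),
         ("summary", summary_agent),
         ("analysis", analysis_agent)]
approved = run_guided_pipeline(steps, "protein folding", reviewer)
print(approved)
```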

Hybrid autonomous AI systems

Hybrid systems offer AI greater flexibility while maintaining human oversight at key points. Rather than being restricted to predefined tasks, AI agents operate within broader roles, making independent decisions and performing multi-step actions before requiring human input. They can refine their own work, collaborate dynamically, and leverage tools before seeking validation.

In a hybrid setup, agents take multiple sequential actions before pausing for human review. An AI scientist might generate a hypothesis, analyze prior research, and design an experiment before requesting feedback. Instead of being confined to single tasks, agents function within open-ended roles, identifying research gaps, drafting questions, and suggesting methodologies to address them. AI also iterates with human guidance, refining models, adjusting parameters, and optimizing performance before further input.
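The difference from a step-by-step pipeline is where the checkpoints sit. In the sketch below, the agent chains several actions on its own and only then asks for review; rejected work is redone with the reviewer's feedback folded in. All function names here are hypothetical.

```python
from typing import Callable, List, Optional


def run_until_checkpoint(actions: List[Callable[[str], str]], state: str) -> str:
    # The agent chains several actions on its own before stopping.
    for action in actions:
        state = action(state)
    return state


def hybrid_loop(actions: List[Callable[[str], str]], state: str,
                review: Callable[[str], Optional[str]],
                max_reviews: int = 3) -> str:
    proposal = state
    for _ in range(max_reviews):
        proposal = run_until_checkpoint(actions, state)
        feedback = review(proposal)
        if feedback is None:  # The human approved this batch of work.
            return proposal
        state = f"{state} ({feedback})"  # Fold feedback into the next pass.
    return proposal


def hypothesize(topic: str) -> str:
    return f"hypothesis about {topic}"


def design_experiment(hypothesis: str) -> str:
    return f"experiment for {hypothesis}"


def human_review(proposal: str) -> Optional[str]:
    # Scripted reviewer: insists on a control group, then approves.
    return None if "control group" in proposal else "add a control group"


plan = hybrid_loop([hypothesize, design_experiment], "sleep and memory", human_review)
print(plan)
```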

This hybrid approach strikes a balance between automation and usability. AI can manage complex workflows, while human oversight ensures relevance, accuracy, and ethical considerations. Full autonomy remains a distant goal, but hybrid systems are already proving effective in real-world research.

Andrew Ng’s thoughts on multi-agent systems

Andrew Ng has acknowledged multi-agent collaboration as a powerful design pattern for AI systems, particularly for complex tasks like software development.

Instead of relying on a single LLM to handle everything, breaking tasks into specialized roles – such as software engineer, product manager, and QA tester – improves efficiency and accuracy. Even if all agents stem from the same LLM, prompting them separately allows for better task decomposition and execution.
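A minimal sketch of that idea: the same model is called three times, and only the role prompt changes. The prompts are made up for illustration, and `fake_llm` is a hypothetical stand-in that simply echoes its inputs so the example runs without an API.

```python
from typing import Callable, Dict, List

ROLE_PROMPTS: Dict[str, str] = {
    "product_manager": "Turn the request into clear requirements.",
    "software_engineer": "Write code that meets the requirements.",
    "qa_tester": "List test cases for the code.",
}


def fake_llm(system_prompt: str, message: str) -> str:
    # Hypothetical stand-in for a real model call; it only echoes its inputs.
    return f"<{system_prompt}> {message}"


def run_team(request: str, llm: Callable[[str, str], str] = fake_llm) -> List[str]:
    transcript = []
    message = request
    # Same model every time; only the role prompt changes.
    for role, prompt in ROLE_PROMPTS.items():
        message = llm(prompt, message)  # Each role builds on the previous output.
        transcript.append(f"{role}: {message}")
    return transcript


transcript = run_team("build a todo app")
for line in transcript:
    print(line)
```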

One key advantage of this approach is that while modern LLMs can process large input contexts, their ability to understand and act on long, complex instructions remains inconsistent. By assigning focused roles, each agent can optimize its part of the task, resulting in improved overall performance. This mirrors real-world project management, where managers divide work among specialists rather than expecting a single person to do everything.

Ng points to emerging frameworks, such as AutoGen, CrewAI, LangGraph, and ChatDev, as examples of multi-agent systems in action. ChatDev, in particular, models a virtual software company where agents collaborate using structured workflows. While these systems aren’t always perfect, they often produce surprisingly effective results.

He also notes that multi-agent collaboration introduces unpredictability, especially when agents interact freely. More structured patterns, such as Reflection and Tool Use, tend to be more reliable. Despite these challenges, Ng sees multi-agent AI as a promising direction for improving complex problem-solving.

Multi-agent AI systems in the real world

From software development and scientific research to finance, healthcare, and customer service, multi-agent setups enable AI to work in structured teams, optimizing workflows and reducing errors. While challenges such as unpredictability and coordination persist, emerging frameworks demonstrate that multi-agent AI can enhance automation, decision-making, and problem-solving in real-world applications. This section explores various implementations and the impact of multi-agent AI across industries.

Research on multi-agent systems

Research on multi-agent AI explores how AI systems collaborate, adapt, and solve complex problems. While often studied in simulated environments, these systems have potential for real-world applications. This section covers key research in the field, from generative agents to AI-driven scientific discovery, highlighting how multi-agent AI can enhance automation, decision-making, and teamwork.

Generative agents: Interactive simulacra of human behavior

AI systems that simulate human behavior are often developed for entertainment or research purposes, but could they also be beneficial in real-world work environments?

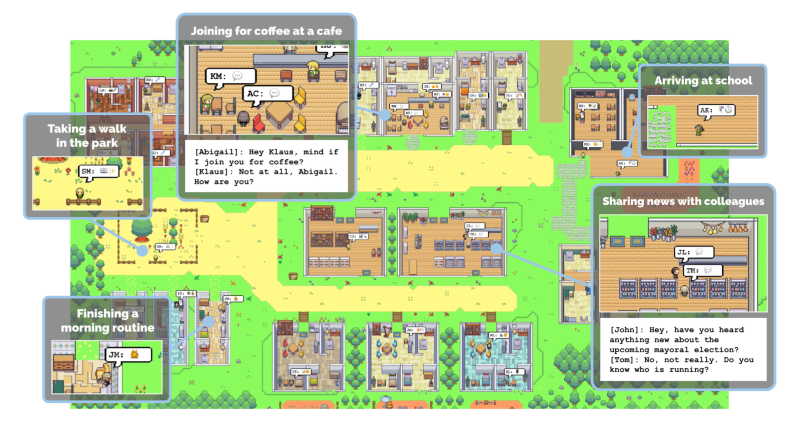

A recent study explored this question by creating generative agents – AI-powered characters capable of recalling past events, forming relationships, and making plans. While largely an exploratory effort focused on social behavior, the results offer insights for more productivity-oriented AI systems.

The researchers placed 25 agents in a simulated town where they lived out their daily routines. The agents ran businesses, conducted research, and even planned a Valentine’s Day party – entirely on their own. One agent decided to host the party, invited friends, and spread the word. Others coordinated decorations, asked each other on dates, and showed up – without explicit instructions. Meanwhile, agents worked as shopkeepers, students, and artists, sticking to schedules and responding dynamically when things changed.

What made this possible? Memory, reflection, and planning. Agents stored past experiences, recalled relevant details, and adjusted their actions accordingly.

Findings showed that memory retrieval failures led to inconsistencies, reflection helped agents form realistic long-term behavior, and planning allowed for multi-step work. Although this was a sandbox experiment, it suggests that similar AI architectures could be useful in workplace automation, collaborative AI teams, and long-term AI assistants that require memory and adaptability over time. AI that can recall, reflect, and plan isn’t just interactive; it might be truly useful.
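To give a feel for how memory retrieval might work, the sketch below ranks stored memories by a mix of relevance, recency, and importance. The scoring is a deliberately simplified assumption (word overlap, exponential decay, equal weights) and should not be read as the study's actual formula; the `Memory` class and the sample memories are invented for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Memory:
    text: str
    time: int        # when it was stored, in arbitrary ticks
    importance: int  # 1 (mundane) to 10 (significant)


def score(memory: Memory, query: str, now: int, decay: float = 0.9) -> float:
    query_words = set(query.lower().split())
    memory_words = set(memory.text.lower().split())
    # Relevance: fraction of query words that appear in the memory.
    relevance = len(query_words & memory_words) / len(query_words) if query_words else 0.0
    # Recency: older memories fade exponentially.
    recency = decay ** (now - memory.time)
    return relevance + recency + memory.importance / 10


def retrieve(memories: List[Memory], query: str, now: int, k: int = 2) -> List[Memory]:
    return sorted(memories, key=lambda m: score(m, query, now), reverse=True)[:k]


memories = [
    Memory("Isabella is planning a Valentine's Day party", time=1, importance=8),
    Memory("ate breakfast at the cafe", time=9, importance=1),
    Memory("Klaus is researching gentrification", time=5, importance=5),
]
top = retrieve(memories, "party planning", now=10, k=1)
print(top[0].text)
```

Even in this toy version, an old but relevant and important memory outranks a recent but trivial one, which is the behavior an agent needs in order to stay consistent over time.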

The AI Scientist

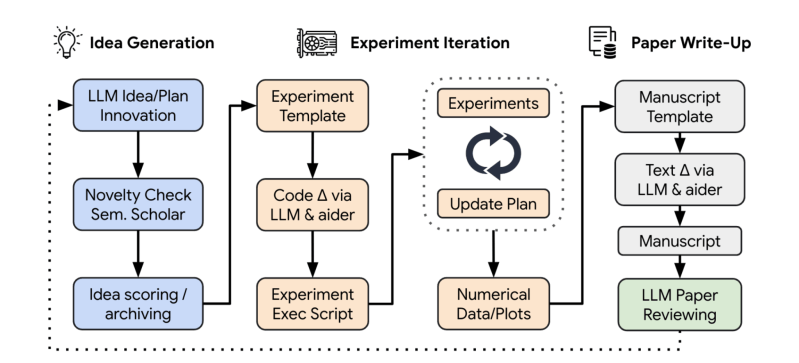

The AI Scientist is an early attempt at automating the research process, using AI to generate ideas, write code, run experiments, analyze results, and draft scientific papers. Given a broad research direction and a simple code template, it explores possible improvements, tests them, and documents its findings, all with minimal human input. It even evaluates its own work through an automated review system, mimicking aspects of peer review.

In one experiment, The AI Scientist proposed a new method for diffusion models, modified the code to implement it, ran tests, and wrote a full research paper detailing the results. While it occasionally made errors in reasoning or execution, it consistently produced papers at a cost of around $15 per study, demonstrating its ability to conduct independent research.

What makes this possible is its ability to remember, reflect, and iterate. It recalls past discoveries, revises flawed approaches, and refines its ideas over multiple attempts. Although still in development, this type of system could eventually assist human researchers by automating repetitive tasks, generating hypotheses, and accelerating scientific progress.

ChatDev

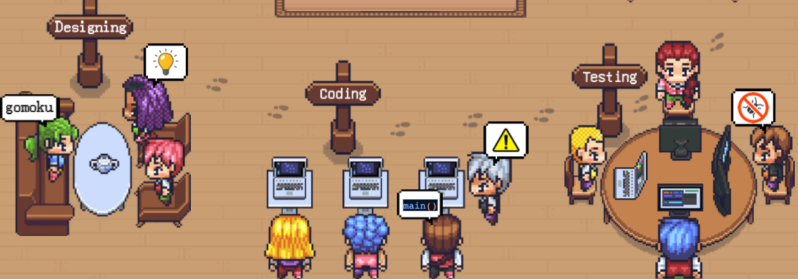

ChatDev is a software development framework that enables multiple LLM-powered agents to collaborate through structured conversations. Instead of handling software development in isolated phases, ChatDev employs a “chat chain” where specialized agents communicate in natural language and code to collaboratively design, develop, and test software.

Each agent has a defined role, such as CEO, CTO, programmer, reviewer, or tester. They interact through multi-turn dialogues, breaking the process into smaller subtasks. The design phase focuses on system planning in natural language, while the coding phase involves writing and refining code through iterative exchanges. The testing phase includes automated debugging, with agents providing structured feedback to identify and correct errors, thereby improving code quality.

To reduce coding hallucinations, where models generate incorrect or incomplete code, ChatDev introduces “communicative dehallucination.” Before responding, agents proactively request clarifications, leading to more accurate outputs. Memory mechanisms manage short-term dialogue context and long-term project history to maintain coherence across development stages.
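The ask-before-answering pattern can be sketched in a few lines. This is not ChatDev's implementation, only an illustration of the idea: the `programmer` agent requests any missing detail instead of guessing, and the `instructor` supplies it. The required details and both functions are hypothetical.

```python
from typing import Dict, List

REQUIRED_DETAILS = ("language", "input_format")


def programmer(task: Dict[str, str]) -> Dict[str, object]:
    # Ask before answering: if a needed detail is missing, request it
    # instead of guessing.
    missing = [key for key in REQUIRED_DETAILS if key not in task]
    if missing:
        return {"type": "question", "needs": missing}
    return {"type": "answer",
            "text": f"{task['language']} script that parses {task['input_format']}"}


def instructor(needs: List[str], facts: Dict[str, str]) -> Dict[str, str]:
    # Supplies only the details that were asked for.
    return {key: facts[key] for key in needs}


def converse(task: Dict[str, str], facts: Dict[str, str],
             max_turns: int = 5) -> List[Dict[str, object]]:
    transcript = []
    task = dict(task)  # Work on a copy so the caller's task is untouched.
    for _ in range(max_turns):
        reply = programmer(task)
        transcript.append(reply)
        if reply["type"] == "answer":
            break
        task.update(instructor(reply["needs"], facts))
    return transcript


transcript = converse({"goal": "count rows"},
                      {"language": "Python", "input_format": "CSV"})
for turn in transcript:
    print(turn)
```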

Compared to single-agent approaches like GPT-Engineer, ChatDev scores higher on completeness, executability, and consistency. While it requires more computational resources, its structured communication model results in higher-quality software. The approach highlights how linguistic coordination between LLM agents can drive automated, multi-agent software development.

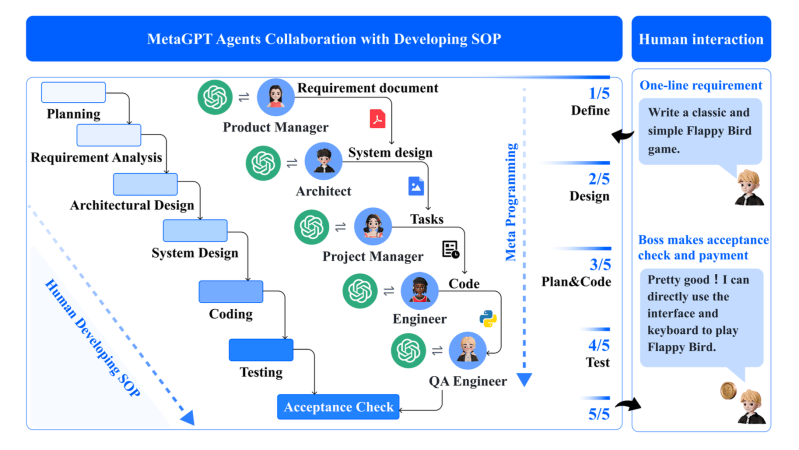

MetaGPT

MetaGPT is a multi-agent software development framework that follows Standardized Operating Procedures (SOPs) to structure collaboration between LLM-powered agents. Instead of relying on unstructured dialogue, as in ChatDev, agents in MetaGPT communicate through documents and diagrams. These structured outputs ensure that all necessary information is retained, reducing errors caused by irrelevant or missing content.

Each agent in MetaGPT has a specialized role, such as Product Manager, Architect, Engineer, or QA. The framework organizes tasks into a structured workflow, where agents follow predefined procedures to analyze requirements, design systems, generate code, and test implementations. A shared message pool allows agents to publish and subscribe to relevant updates, ensuring efficient information flow without unnecessary exchanges.
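A shared message pool with publish and subscribe semantics could be sketched as follows. The `MessagePool` class, its method names, and the topic names are assumptions made for illustration and do not mirror MetaGPT's actual API.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set


@dataclass(frozen=True)
class Message:
    sender: str
    topic: str
    content: str


class MessagePool:
    def __init__(self) -> None:
        self._messages: List[Message] = []
        self._topics: Dict[str, Set[str]] = defaultdict(set)

    def subscribe(self, agent: str, topic: str) -> None:
        self._topics[agent].add(topic)

    def publish(self, sender: str, topic: str, content: str) -> None:
        self._messages.append(Message(sender, topic, content))

    def inbox(self, agent: str) -> List[Message]:
        # An agent sees only topics it subscribed to, never its own posts.
        wanted = self._topics.get(agent, set())
        return [m for m in self._messages
                if m.topic in wanted and m.sender != agent]


pool = MessagePool()
pool.subscribe("engineer", "design")
pool.subscribe("qa", "code")
pool.publish("product_manager", "requirements", "PRD v1")
pool.publish("architect", "design", "API diagram")
pool.publish("engineer", "code", "main.py")

print([m.content for m in pool.inbox("engineer")])
print([m.content for m in pool.inbox("qa")])
```

Filtering by subscription is what keeps each agent's context small: the engineer never has to read the requirements thread unless it subscribes to it.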

MetaGPT also introduces an “executable feedback” mechanism. After generating code, agents run tests and refine their outputs based on real execution results. This iterative debugging process reduces hallucinations and improves software reliability without requiring human intervention.
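The loop below is a minimal sketch of executable feedback under simplifying assumptions: the generated code is run against a single test, and any failure message is passed back to the code-writing agent for another attempt. `toy_engineer` is a hypothetical stand-in for a model, and running `exec` on untrusted model output would need sandboxing in a real system.

```python
from typing import Callable, Optional, Tuple


def run_tests(source: str) -> Optional[str]:
    """Execute the code and one test; return an error message or None."""
    namespace: dict = {}
    try:
        exec(source, namespace)
        assert namespace["add"](2, 3) == 5, "add(2, 3) should be 5"
    except Exception as exc:
        return f"{type(exc).__name__}: {exc}"
    return None


def toy_engineer(feedback: Optional[str]) -> str:
    # Hypothetical model: the first draft has a bug, the revision fixes it.
    if feedback is None:
        return "def add(a, b):\n    return a - b\n"
    return "def add(a, b):\n    return a + b\n"


def build_with_feedback(engineer: Callable[[Optional[str]], str],
                        max_attempts: int = 3) -> Tuple[str, int]:
    feedback = None
    for attempt in range(1, max_attempts + 1):
        source = engineer(feedback)
        feedback = run_tests(source)  # Real execution results, not a guess.
        if feedback is None:
            return source, attempt
    raise RuntimeError(f"still failing after {max_attempts} attempts: {feedback}")


final_source, attempts = build_with_feedback(toy_engineer)
print(attempts)
print(final_source)
```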

By enforcing structured communication and integrating real-time code testing, MetaGPT produces more accurate and coherent software solutions while minimizing inefficiencies in multi-agent collaboration.

Enhancing diagnostic accuracy through multi-agent conversations

Multi-agent AI systems are being explored as a way to improve diagnostic accuracy in medicine, particularly by addressing cognitive biases that can lead to misdiagnoses. Recent research tested an AI-driven multi-agent conversation framework in a clinical setting, where LLM-powered agents simulated the decision-making process of a medical team. Each agent played a specific role: one proposed an initial diagnosis, another acted as a critical challenger to question assumptions, a third facilitated discussion to prevent premature conclusions, and a fourth summarized findings.

The study evaluated 16 clinical cases where human doctors had previously made diagnostic errors due to cognitive biases. In 80 simulated diagnostic scenarios, the AI agents initially made incorrect diagnoses in every case. However, after multi-agent discussion, the top differential diagnosis was correct in 71.3% of scenarios, and the correct diagnosis appeared among the final two differential diagnoses in 80.0%. These results suggest that multi-agent AI discussions could help refine medical decision-making, particularly in complex cases where initial diagnoses are misleading.

While still in the research phase, this approach shows promise for assisting clinicians by providing a structured, multi-perspective analysis of patient cases. Future developments could integrate these AI agents into electronic medical records, providing real-time diagnostic support and enabling doctors to recognize and correct biases before they impact patient outcomes.

How Moody’s is using multi-agent AI systems

Moody’s, a leading provider of financial analysis and credit ratings, assesses risk for businesses, governments, and investors worldwide. To enhance its research and evaluation processes, Moody’s is using multi-agent AI systems to automate financial research, credit risk assessment, and market analysis. Their system comprises a network of AI agents assigned specific roles, such as retrieving financial reports, analyzing creditworthiness, identifying emerging risks, and generating insights for informed investment decisions. These agents collaborate by cross-verifying data, refining outputs, and improving the accuracy of financial evaluations.

A key aspect of Moody’s multi-agent approach is the use of LLMs as evaluators, where AI models assess the relevance and reliability of retrieved information. This helps ensure that the financial insights generated by AI agents align with industry standards and market realities. Additionally, Moody’s employs a mixture-of-experts framework, where different AI agents specialize in specific tasks (such as corporate bond analysis, ESG risk assessment, or regulatory compliance), allowing for more precise and well-rounded evaluations.
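The two ideas in this paragraph, an evaluator that filters retrieved information and specialists that each handle one kind of question, can be illustrated with a toy sketch. Nothing here reflects Moody’s actual system: the expert functions, the keyword routing, and the word-overlap score standing in for an LLM judge are all assumptions made so the example runs on its own.

```python
from typing import Callable, Dict, List


def bond_expert(question: str) -> str:
    return f"bond analysis: {question}"


def esg_expert(question: str) -> str:
    return f"ESG assessment: {question}"


def compliance_expert(question: str) -> str:
    return f"compliance check: {question}"


# Hypothetical routing table: keyword in the question -> specialist agent.
EXPERTS: Dict[str, Callable[[str], str]] = {
    "bond": bond_expert,
    "esg": esg_expert,
    "regulation": compliance_expert,
}


def route(question: str) -> List[str]:
    lowered = question.lower()
    return [expert(question) for keyword, expert in EXPERTS.items()
            if keyword in lowered]


def evaluator_score(question: str, passage: str) -> float:
    # Stand-in for an LLM judge: share of question words found in the passage.
    q_words = set(question.lower().split())
    p_words = set(passage.lower().split())
    return len(q_words & p_words) / len(q_words) if q_words else 0.0


def filter_passages(question: str, passages: List[str],
                    threshold: float = 0.5) -> List[str]:
    return [p for p in passages if evaluator_score(question, p) >= threshold]


question = "esg risk for acme"
kept = filter_passages(question, ["acme esg risk report 2023",
                                  "weather forecast for tuesday"])
print(kept)
print(route(question))
```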

By leveraging multi-agent AI, Moody’s streamlines traditionally manual financial research processes, improving efficiency and consistency in credit ratings, risk analysis, and investment intelligence. This system enables more scalable and automated financial decision-making while reducing the human workload in data-intensive evaluations.

Multi-agent AI systems in customer service

Customer service is an ideal use case for multi-agent AI systems because it involves a mix of high-volume repetitive inquiries and complex information retrieval. Many customer interactions follow predictable patterns, such as tracking orders, processing refunds, or troubleshooting common issues, but still require pulling data from different sources and coordinating across multiple departments. Multi-agent systems are well-suited for this environment because they enable different agents to specialize in distinct tasks while collaborating to deliver seamless support.

Instead of relying on a single chatbot to handle everything, a multi-agent system breaks customer service into specialized roles. For example, in e-commerce and logistics, a system might include:

- A customer inquiry agent that manages initial interactions and routes requests.

- A payment processing agent that handles billing issues and transaction verification.

- A shipping agent that retrieves real-time tracking updates and resolves delivery problems.

- A returns & refunds agent that checks eligibility, processes returns, and updates inventory.

Each department can focus on developing and refining its own agent, making the system more scalable than a single-agent approach. Instead of building one AI model that must handle every type of customer request, companies can improve individual agents separately, upgrading the shipping agent with better tracking integrations or enhancing the payment processing agent to detect fraudulent transactions more effectively. This modular approach also makes maintenance easier, as specific agents can be updated or replaced without disrupting the entire system.
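A small sketch of this modular setup: an inquiry agent routes each message to a department agent, and any department's agent can be swapped out without touching the rest. The keyword table and all agent functions are hypothetical; a production router would more likely use a model to classify intent.

```python
from typing import Callable, Dict

AgentFn = Callable[[str], str]


def payment_agent(message: str) -> str:
    return "payment: checking the transaction"


def shipping_agent(message: str) -> str:
    return "shipping: fetching tracking status"


def returns_agent(message: str) -> str:
    return "returns: checking eligibility"


AGENTS: Dict[str, AgentFn] = {
    "payment": payment_agent,
    "shipping": shipping_agent,
    "returns": returns_agent,
}

# Hypothetical keyword table mapping words in a message to a department.
KEYWORDS: Dict[str, str] = {
    "refund": "returns", "return": "returns",
    "charge": "payment", "invoice": "payment",
    "tracking": "shipping", "delivery": "shipping",
}


def inquiry_agent(message: str, agents: Dict[str, AgentFn] = AGENTS) -> str:
    lowered = message.lower()
    for keyword, department in KEYWORDS.items():
        if keyword in lowered:
            return agents[department](message)
    return "inquiry: could you tell me more about the issue?"


def better_shipping_agent(message: str) -> str:
    return "shipping: live carrier lookup"


# Upgrading one department means replacing one entry in the registry.
upgraded = dict(AGENTS, shipping=better_shipping_agent)

print(inquiry_agent("Where is my delivery?"))
print(inquiry_agent("Where is my delivery?", upgraded))
print(inquiry_agent("I was charged twice"))
```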

For more advanced setups, AI-powered escalation agents monitor frustration levels and determine when a human should intervene. Some companies also deploy AI supervisor agents that oversee multiple interactions, optimize workflows, and detect inefficiencies in real time.
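An escalation agent could be as simple as the sketch below, which counts frustration cues across a conversation and hands off to a human once a threshold is crossed. The cue list and threshold are arbitrary assumptions; a real system would more plausibly use a sentiment model.

```python
from typing import List

# Hypothetical cue phrases; chosen only for illustration.
FRUSTRATION_CUES = ("ridiculous", "unacceptable", "third time", "cancel", "!!")


def frustration_score(messages: List[str]) -> int:
    # Count every cue that appears in every customer message so far.
    return sum(cue in message.lower()
               for message in messages
               for cue in FRUSTRATION_CUES)


def should_escalate(messages: List[str], threshold: int = 2) -> bool:
    return frustration_score(messages) >= threshold


calm = ["Where is my order?"]
upset = ["Where is my order?",
         "This is the third time I am asking!!",
         "Unacceptable."]

print(should_escalate(calm))
print(should_escalate(upset))
```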

By leveraging multi-agent AI, customer service operations can automate routine tasks, retrieve information more efficiently, and scale AI development across departments. This not only improves response times and accuracy but also allows human agents to focus on complex cases, enhancing overall customer satisfaction.

Conclusion

Multi-agent AI systems represent a shift in how artificial intelligence is applied to complex tasks. Rather than relying on a single model to manage every aspect of a process, these systems distribute responsibilities across specialized agents, allowing for greater efficiency, accuracy, and scalability. While challenges remain, particularly in areas such as self-correction, long-term memory, and managing unpredictability, continued advancements in AI coordination strategies are making these systems more practical and reliable.

The real value of multi-agent AI will stem from its ability to integrate seamlessly into real-world workflows, whether in research, software development, customer service, or other applications. Fully autonomous systems may still be limited in their effectiveness, but hybrid approaches that balance AI autonomy with human oversight are already proving useful. As these systems evolve, they could redefine how we approach automation, enabling AI to function not just as a tool but as a collaborative partner in problem-solving and innovation.