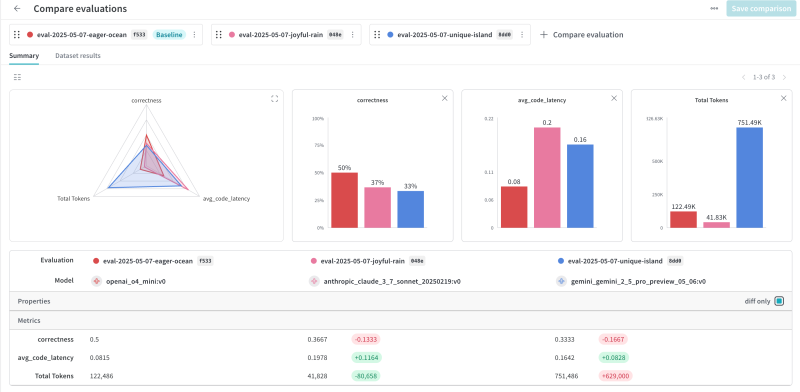


You’ve built an AI agent that can search databases, call APIs, and draft customer emails. In the demo, it looks impressive. In production, it tells a user their refund is approved when it isn’t, leaks internal pricing to a competitor, and tries to execute a SQL query that would drop a table.

AI agents are useful because they act autonomously. They’re also risky for the same reason. When you give an LLM a loop and tools, you get a system that can do real things in real environments, including things you didn’t intend.

The gap between “works in testing” and “safe in production” is where AI guardrails come in. This article covers what guardrails actually are, how they fit into agent architectures, and how to implement them with scorers and monitors.

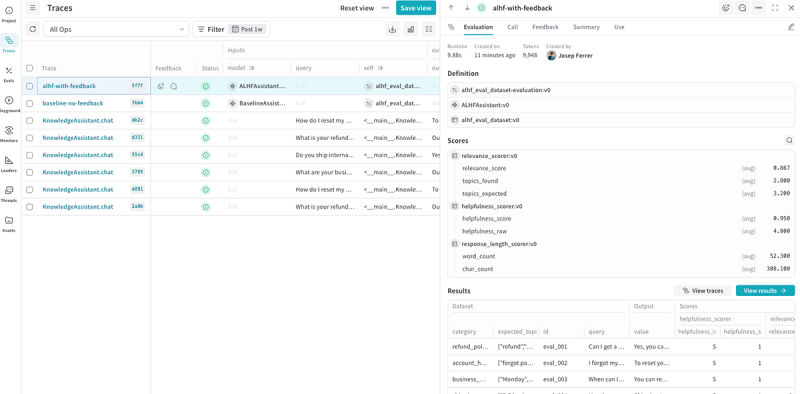

We’ll also walk through a practical setup using W&B Weave, including runtime checks, trust scoring, and human-in-the-loop patterns.

Guardrails for AI agents are constraints that keep an agent operating within acceptable bounds. They translate organizational policies, such as what the agent can see, decide, and do, into technical checks that run before, during, or after the agent acts.

The goal isn’t to make the agent dumber. It’s to make it predictable. An agent without guardrails might occasionally produce brilliant results, but it will also occasionally hallucinate financial advice, expose PII, or execute actions that require human approval. Guardrails let you keep the useful autonomy while cutting off the dangerous edge cases.

In practice, guardrails cover several categories:

These aren’t just nice-to-haves for regulated industries. Any agent that interacts with users or business systems needs them. The question is whether you design them intentionally or discover their absence through incidents.

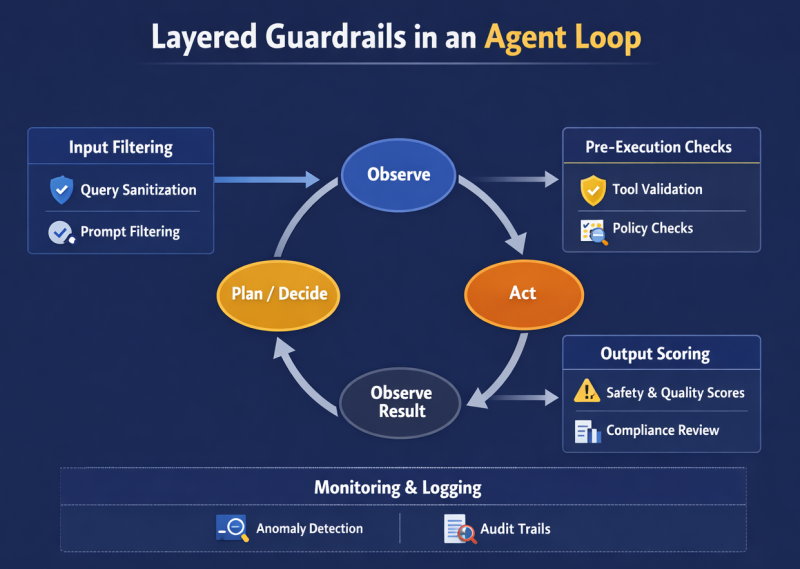

A typical AI agent runs in a loop: observe context, decide on an action, execute the action, observe the result, repeat. Guardrails plug into multiple points in this cycle.

This layered approach matters because no single check catches everything. A prompt-injection filter might miss a novel attack, but an output-toxicity scorer can still flag the harmful content that attack produces.
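To make the layering concrete, here is a minimal, framework-free sketch (plain Python, not the Weave API) of one pass through the loop with a check at three points: on the user input, on the proposed tool call, and on the drafted output. The phrase list, the `ALLOWED_TOOLS` allowlist, the toy toxicity scorer, and its 0.1 cutoff are hypothetical stand-ins for real classifiers and policies; the model, tool, and reply steps are passed in as plain functions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Callable, Optional

# Hypothetical stand-ins for real classifiers and policies.
INJECTION_MARKERS = ("ignore previous instructions", "disregard your system prompt")
ALLOWED_TOOLS = {"search_orders", "get_refund_status"}
TOXIC_WORDS = {"idiot", "stupid"}


@dataclass
class ToolCall:
    name: str
    args: dict = field(default_factory=dict)


def looks_like_injection(text: str) -> bool:
    """Input check: naive phrase match for prompt-injection attempts."""
    lowered = text.lower()
    return any(marker in lowered for marker in INJECTION_MARKERS)


def tool_call_allowed(call: ToolCall) -> bool:
    """Action check: only tools on the allowlist may run."""
    return call.name in ALLOWED_TOOLS


def toxicity_score(text: str) -> float:
    """Output check: fraction of words on a blocklist (a toy scorer)."""
    words = [w.strip(".,!?") for w in text.lower().split()]
    return sum(w in TOXIC_WORDS for w in words) / len(words) if words else 0.0


def guarded_step(user_input: str,
                 plan_tool_call: Callable[[str], Optional[ToolCall]],
                 run_tool: Callable[[ToolCall], object],
                 draft_reply: Callable[[str, object], str]) -> str:
    """One observe -> decide -> act -> respond pass with a check at each stage."""
    if looks_like_injection(user_input):
        return "Sorry, I can't help with that request."
    call = plan_tool_call(user_input)
    observation = None
    if call is not None:
        if not tool_call_allowed(call):
            return f"Blocked tool call: {call.name}"
        observation = run_tool(call)
    reply = draft_reply(user_input, observation)
    if toxicity_score(reply) > 0.1:
        return "Sorry, I couldn't produce a safe response."
    return reply


# Usage with stubbed model and tool functions.
print(guarded_step(
    "Where is order 42?",
    plan_tool_call=lambda q: ToolCall("search_orders", {"order_id": 42}),
    run_tool=lambda call: {"status": "shipped"},
    draft_reply=lambda q, obs: f"Your order is {obs['status']}.",
))  # Your order is shipped.
```

Because each stage is independent, a request phrased in a way the injection check does not recognize can still be stopped if the reply it leads to trips the output scorer.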

In agent frameworks, guardrails typically live in a few places:

The combination of proactive blocking and passive monitoring gives you both immediate protection and the data to improve policies over time.

Policy-level guardrails define acceptable behavior in human-readable terms. These come from legal requirements, compliance obligations, and organizational values.

For a customer support agent, policy-level guardrails might include:

These rules should be documented before you write any code. They’re the source of truth that configuration and runtime checks implement.

Writing them typically brings legal, compliance, security, and product teams together. Skipping this step leads to guardrails that protect against the wrong things or miss critical risks entirely.

Configuration guardrails translate policies into technical settings that constrain the agent structurally.

Configuration guardrails prevent entire categories of problems before runtime. An agent without database write access can’t drop tables, regardless of what instructions it receives.

Runtime guardrails evaluate agent behavior as it happens. They use scorers, which are functions that rate inputs or outputs against specific criteria, to decide whether to proceed, modify, or block.
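A minimal sketch of a runtime scorer and the proceed / modify / block decision it drives. It assumes regex-based PII detection, a penalty-weighted score in [0, 1], and two illustrative thresholds; the patterns, weights, and names are all hypothetical, and a production PII scorer would rely on a dedicated detector rather than two regular expressions.

```python
from __future__ import annotations

import re
from enum import Enum

# Illustrative patterns only; a real PII scorer would use a dedicated detector.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
US_SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")

PROCEED_AT = 1.0  # no PII detected at all
MODIFY_AT = 0.5   # a little low-risk PII: redact it and continue


class Action(Enum):
    PROCEED = "proceed"
    MODIFY = "modify"
    BLOCK = "block"


def pii_score(text: str) -> float:
    """Score in [0, 1]; 1.0 means no PII found. SSNs cost more than emails."""
    penalty = 0.3 * len(EMAIL.findall(text)) + 0.7 * len(US_SSN.findall(text))
    return max(0.0, 1.0 - penalty)


def enforce(text: str) -> tuple[Action, str]:
    """Turn the score into one of three outcomes."""
    score = pii_score(text)
    if score >= PROCEED_AT:
        return Action.PROCEED, text
    if score >= MODIFY_AT:
        # Any SSN drops the score below MODIFY_AT, so only emails reach here.
        return Action.MODIFY, EMAIL.sub("[REDACTED EMAIL]", text)
    return Action.BLOCK, "Sorry, I can't share that information."


action, text = enforce("Email jane@example.com for help")
print(action.value, text)  # modify Email [REDACTED EMAIL] for help
```

Keeping the score (a number) separate from the enforcement policy (what to do with that number) lets you retune thresholds without touching detection logic.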

Common scorer categories:

Scorers run on every relevant operation. When a score falls below the threshold, the system can:

The key insight is that scorers can serve two purposes. As guardrails, they actively block or modify unsafe content in real time. As monitors, they passively track quality metrics over time without affecting the response.
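To illustrate the two roles, the sketch below wraps one toy scorer both ways. `grounding_score` (word overlap with the retrieved context), the 0.8 threshold, and the in-memory `metrics` list are hypothetical stand-ins; in practice the monitor would write to a tracing or metrics backend, and the scorer would be something stronger than word overlap.

```python
from __future__ import annotations

from typing import Callable

Scorer = Callable[[str, str], float]
metrics: list[dict] = []  # stand-in for a real metrics or trace store


def grounding_score(answer: str, context: str) -> float:
    """Toy scorer: share of the answer's words that also appear in the context."""
    answer_words = set(answer.lower().split())
    if not answer_words:
        return 1.0
    context_words = set(context.lower().split())
    return len(answer_words & context_words) / len(answer_words)


def as_guardrail(scorer: Scorer, threshold: float, fallback: str):
    """Active use: replace the answer when its score is below the threshold."""
    def check(answer: str, context: str) -> str:
        return answer if scorer(answer, context) >= threshold else fallback
    return check


def as_monitor(scorer: Scorer, name: str):
    """Passive use: record the score and always return the answer unchanged."""
    def check(answer: str, context: str) -> str:
        metrics.append({"scorer": name, "score": scorer(answer, context)})
        return answer
    return check


context = "order 42 shipped on monday"
guard = as_guardrail(grounding_score, 0.8, "Let me double-check that for you.")
monitor = as_monitor(grounding_score, "grounding")
print(guard("order 42 was refunded", context))    # Let me double-check that for you.
print(monitor("order 42 was refunded", context))  # order 42 was refunded
print(metrics)  # [{'scorer': 'grounding', 'score': 0.5}]
```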

Runtime monitoring watches the agent as it operates. Every input, every tool call, every output gets logged and scored. This serves two purposes: catching problems in real time and building a dataset for improving policies later.

Effective runtime monitoring tracks several dimensions:

When metrics drift outside normal ranges, the system should alert. A groundedness score that drops from 0.95 to 0.80 across a week might indicate a data source problem or a prompt regression.
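A minimal sketch of drift alerting on daily averages, assuming you already aggregate one mean groundedness score per day. It compares the most recent days against the baseline window just before them; the window sizes, the 0.05 tolerance, and the `check_drift` name are illustrative.

```python
from __future__ import annotations

from statistics import mean


def check_drift(daily_scores: list[float], baseline_days: int = 7,
                recent_days: int = 3, tolerance: float = 0.05) -> str | None:
    """Return an alert message if the recent mean fell more than `tolerance`
    below the baseline mean, else None."""
    if len(daily_scores) < baseline_days + recent_days:
        return None  # not enough history to compare yet
    window = daily_scores[-(baseline_days + recent_days):]
    baseline = mean(window[:baseline_days])
    recent = mean(window[baseline_days:])
    if baseline - recent > tolerance:
        return f"groundedness dropped from {baseline:.2f} to {recent:.2f}"
    return None


# A stable week followed by three declining days triggers an alert.
print(check_drift([0.95] * 7 + [0.85, 0.82, 0.80]))
# groundedness dropped from 0.95 to 0.82
```

Comparing window averages rather than single days keeps one noisy day from paging anyone, at the cost of detecting a real regression a few days later.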

For enforcement, runtime checks need to be fast enough not to crater latency. Simple classifiers and embedding comparisons work well. Heavyweight checks like full LLM-as-judge evaluation might run asynchronously on a sample of traffic rather than blocking every request.
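A minimal sketch of that split, assuming a cheap inline check on every response and a slower judge run on a background thread for a sampled share of traffic. `slow_judge` is a placeholder for an LLM-as-judge call, and the 10% sample rate and function names are illustrative; in a real deployment the judged results would be logged for monitoring rather than kept in a list.

```python
from __future__ import annotations

import random
from concurrent.futures import Future, ThreadPoolExecutor

JUDGE_SAMPLE_RATE = 0.1        # fraction of traffic sent to the slow judge
judge_pool = ThreadPoolExecutor(max_workers=2)
sampler = random.Random(0)     # seeded only to make the example reproducible
pending_judgments: list[Future] = []


def fast_check(answer: str) -> bool:
    """Inline, cheap check; a stand-in for a small classifier."""
    return 0 < len(answer) <= 2000


def slow_judge(question: str, answer: str) -> float:
    """Placeholder for an LLM-as-judge call that may take seconds."""
    return 1.0 if question and answer else 0.0


def respond(question: str, answer: str) -> str:
    # Blocking path: only the fast check sits between the model and the user.
    if not fast_check(answer):
        return "Sorry, something went wrong. Please try again."
    # Non-blocking path: sample some traffic for the expensive judge.
    if sampler.random() < JUDGE_SAMPLE_RATE:
        pending_judgments.append(judge_pool.submit(slow_judge, question, answer))
    return answer


print(respond("Where is my order?", "It shipped on Monday."))
```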

Some decisions shouldn’t be automated at all. Human-in-the-loop (HITL) patterns route high-stakes actions to humans for approval.

The trigger is usually a combination of:

For example, a financial services agent might:

The escalation path matters as much as the threshold. A request that sits in a queue for 24 hours defeats the purpose of automation. Good HITL systems have clear SLAs, routing to available reviewers, and fallback behaviors when no human is available.
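A minimal sketch of that escalation logic, assuming a hypothetical `ask_reviewer` callable that blocks until a human approves (`True`) or rejects (`False`) a refund. The $500 limit, the 15-minute SLA, and the hold-and-follow-up fallback are illustrative values; what matters is that the threshold, the wait, and the no-reviewer behavior are all explicit.

```python
from __future__ import annotations

from concurrent.futures import ThreadPoolExecutor
from concurrent.futures import TimeoutError as ReviewTimeout
from dataclasses import dataclass
from typing import Callable

AUTO_APPROVE_LIMIT = 500.00    # refunds above this need a human (illustrative)
REVIEW_SLA_SECONDS = 15 * 60   # how long to wait for a reviewer (illustrative)
review_pool = ThreadPoolExecutor(max_workers=4)


@dataclass
class RefundRequest:
    customer_id: str
    amount: float


def handle_refund(req: RefundRequest,
                  ask_reviewer: Callable[[RefundRequest], bool],
                  sla_seconds: float = REVIEW_SLA_SECONDS) -> str:
    """Auto-approve small refunds; escalate large ones with an SLA and a fallback."""
    if req.amount <= AUTO_APPROVE_LIMIT:
        return "approved automatically"
    # Route to a reviewer without blocking forever on their answer.
    decision = review_pool.submit(ask_reviewer, req)
    try:
        approved = decision.result(timeout=sla_seconds)
    except ReviewTimeout:
        # Fallback when no human answers in time: take no irreversible action.
        return "on hold: a specialist will follow up"
    return "approved by reviewer" if approved else "rejected by reviewer"


print(handle_refund(RefundRequest("c1", 120.0), ask_reviewer=lambda r: True))
# approved automatically
```

On timeout the sketch simply stops waiting; in a real system the request would typically stay open as a ticket in a review queue so a late decision is not lost.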

HITL isn’t just about blocking risky actions. It’s also a feedback mechanism. When humans approve or reject agent proposals, that data improves future policy decisions and scorer calibration.
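One simple way to use those decisions, sketched under assumptions: log each escalated proposal's scorer score together with the reviewer's verdict, then pick the auto-approve threshold that would have agreed with reviewers most often. The sketch weights a wrong auto-approval three times as heavily as an unnecessary escalation; that cost ratio, the `calibrate_threshold` name, and the sample data are illustrative.

```python
from __future__ import annotations


def calibrate_threshold(history: list[tuple[float, bool]],
                        false_approve_cost: float = 3.0) -> float:
    """Choose t for the rule 'auto-approve when score >= t' that best matches
    past human decisions. `history` holds (scorer score, human approved?)."""
    if not history:
        raise ValueError("need at least one labeled decision")

    def cost(t: float) -> float:
        total = 0.0
        for score, approved in history:
            auto = score >= t
            if auto and not approved:
                total += false_approve_cost  # agent would do what a human rejected
            elif not auto and approved:
                total += 1.0  # needless escalation: a human approved it anyway
        return total

    candidates = sorted({score for score, _ in history})
    # Lowest cost wins; ties go to the stricter (higher) threshold.
    return min(candidates, key=lambda t: (cost(t), -t))


reviews = [(0.95, True), (0.90, True), (0.85, True), (0.80, False),
           (0.70, True), (0.60, False), (0.40, False)]
print(calibrate_threshold(reviews))  # 0.85
```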

Scorers turn qualitative notions of “safe” and “good” into numbers you can track and threshold on.

Trust scores aggregate multiple signals into a single indicator of output reliability. A trust score might combine:

Risk scores flag potential problems. They might cover:

The key is making these scores actionable. A hallucination score of 0.73 is useless unless you know what threshold triggers intervention and what intervention to take.
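A minimal sketch of a trust score that is actionable by construction: three hypothetical signals, each already normalized to [0, 1] with higher meaning more reliable, are combined with fixed weights, and every score range maps to a named intervention. The signal names, weights, and action table are illustrative assumptions, not recommended values.

```python
from __future__ import annotations

# Illustrative weights; they sum to 1 so the trust score stays in [0, 1].
WEIGHTS = {"groundedness": 0.5, "retrieval_relevance": 0.3, "self_consistency": 0.2}

# Checked top-down: the first threshold the score meets decides the action.
ACTIONS = [
    (0.85, "send"),
    (0.60, "send_with_citation_warning"),
    (0.40, "escalate_to_human"),
    (0.00, "refuse"),
]


def trust_score(signals: dict[str, float]) -> float:
    """Weighted average of the signals; every weighted signal is required."""
    missing = WEIGHTS.keys() - signals.keys()
    if missing:
        raise ValueError(f"missing signals: {sorted(missing)}")
    return sum(weight * signals[name] for name, weight in WEIGHTS.items())


def action_for(score: float) -> str:
    """Map a trust score to a concrete intervention."""
    for threshold, action in ACTIONS:
        if score >= threshold:
            return action
    return "refuse"


signals = {"groundedness": 0.8, "retrieval_relevance": 0.7, "self_consistency": 0.6}
score = trust_score(signals)
print(round(score, 2), action_for(score))  # 0.73 send_with_citation_warning
```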

Good scorer design follows a few principles:

Inside a well-structured agent, guardrails and scorers form a feedback loop around every decision.

Here’s the typical flow when a user sends a query:

This isn’t overhead you add later. It’s how the agent should work from the start. Retrofitting guardrails onto an existing agent is much harder than designing with them.

Building guardrails sounds straightforward in theory. In practice, teams repeatedly run into the same problems. The tricky part isn’t implementing the checks; it’s getting the balance right between safety and usability, and keeping the system maintained over time.

Watching teams build guardrail systems, some that held up well and others that became maintenance nightmares, reveals a few recurring patterns. None of them are surprising, but they’re easy to skip when you’re racing to ship.

The shift from passive chatbots to active agents is a shift in liability. A chatbot that hallucinates is embarrassing; an agent that executes a bad SQL query is destructive.

Building guardrails isn’t just about preventing disasters—it’s about unlocking utility. You cannot confidently deploy an agent to handle refunds, manage calendar invites, or query internal databases until you can prove it stays within the lines.

By implementing the three-layer approach—policy for the rules, configuration for the structure, and runtime scorers for the execution—you create a system that is robust enough for the real world. This “defense in depth” turns a fragile demo into a production-grade application where humans can trust the machine to act on their behalf.

The architecture described here provides the blueprint. The next step is translating these patterns into code, setting up the actual scorers, and connecting the feedback loops that keep them accurate.

Ready to build this? The companion tutorial walks through the practical implementation of these concepts using Weights & Biases Weave, including code samples for setting up trust scorers and human-in-the-loop workflows.