It feels like the release of OpenAI’s ChatGPT has opened Pandora’s box of large language models (LLMs) in production. Not only does your neighbor now bother you with small talk about artificial intelligence, but the machine learning (ML) community is talking about yet another new term: “LLMOps.”

LLMs are changing the way we build and maintain AI-powered products. This will lead to new sets of tools and best practices for the lifecycle of LLM-powered applications.

This article will first explain the newly emerged term “LLMOps” and its background. We’ll discuss how building AI products with LLMs differs from building them with classical ML models. Based on these differences, we’ll look at how MLOps differs from LLMOps. Finally, we’ll discuss what developments we can expect in the LLMOps space in the near future.

The term LLMOps stands for Large Language Model Operations. The short definition is that LLMOps is MLOps for LLMs. That means that LLMOps is, essentially, a new set of tools and best practices to manage the lifecycle of LLM-powered applications, including development, deployment, and maintenance.

When we say that “LLMOps is MLOps for LLMs”, we need to define the terms LLMs and MLOps first:

With that out of the way, let’s dig in a bit further.

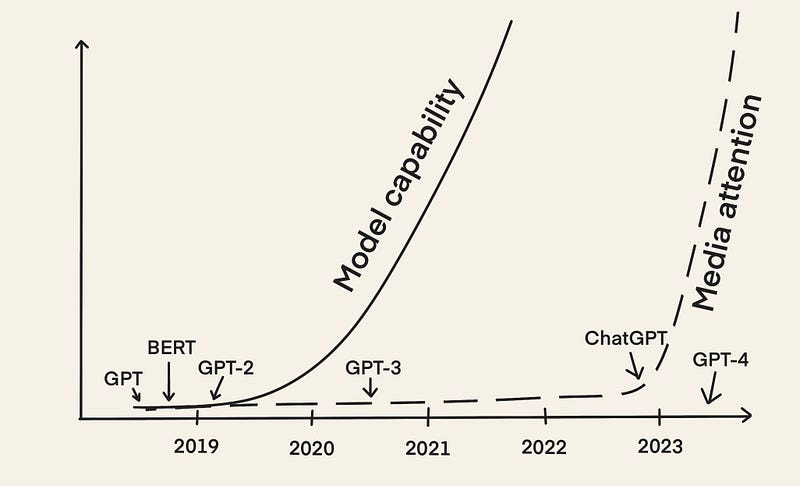

Early LLMs like BERT and GPT-2 have been around since 2018. Yet only now, almost five years later, are we experiencing a meteoric rise of the idea of LLMOps. The main reason is that LLMs gained much media attention with the release of ChatGPT at the end of November 2022.

Since then, we have seen many different applications leveraging the power of LLMs, such as conversational AI, writing assistants, and programming assistants.

With many people developing and bringing LLM-powered applications to production, people are sharing their experiences:

“It’s easy to make something cool with LLMs, but very hard to make something production-ready with them.” - Chip Huyen [2]

It’s become clear that building production-ready LLM-powered applications comes with its own set of challenges, different from building AI products with classical ML models. To tackle these challenges, we need to develop new tools and best practices to manage the LLM application lifecycle. Thus, we see an increased use of the term “LLMOps.”

The steps involved in LLMOps are in some ways similar to MLOps. However, the steps of building an LLM-powered application differ due to the emergence of foundation models. Instead of training LLMs from scratch, the focus lies on adapting pre-trained LLMs to downstream tasks.

More than a year ago, Andrej Karpathy [3] described how the process of building AI products would change:

But the most important trend […] is that the whole setting of training a neural network from scratch on some target task […] is quickly becoming outdated due to finetuning, especially with the emergence of foundation models like GPT. These foundation models are trained by only a few institutions with substantial computing resources, and most applications are achieved via lightweight finetuning of part of the network, prompt engineering, or an optional step of data or model distillation into smaller, special-purpose inference networks. […] - Andrej Karpathy [3]

This quote may be overwhelming the first time you read it. But it summarizes everything that has been going on precisely, so let’s unpack it step by step in the following subsections.

Foundation models are LLMs pre-trained on large amounts of data that can be used for a wide range of downstream tasks. Because training a foundation model from scratch is complicated, time-consuming, and extremely expensive, only a few institutions have the required training resources [3].

Just to put it into perspective: according to a study from Lambda Labs in 2020, training OpenAI’s GPT-3 (with 175 billion parameters) would take 355 years and cost $4.6 million on a single Tesla V100 cloud instance.
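The arithmetic behind such an estimate can be sketched as follows. The three input values are assumptions that are not stated in this article (they are the figures commonly quoted alongside the Lambda Labs estimate), so treat the result as an order-of-magnitude check rather than an exact reproduction.

```python
# Assumed inputs, not taken from this article:
TOTAL_TRAINING_FLOPS = 3.14e23   # assumed total compute to train GPT-3 (175B)
V100_FLOPS_PER_SECOND = 28e12    # assumed throughput of a single V100
PRICE_PER_GPU_HOUR_USD = 1.50    # assumed cloud price per V100 hour

SECONDS_PER_HOUR = 3600
HOURS_PER_YEAR = 24 * 365


def training_estimate(total_flops, flops_per_second, price_per_hour):
    """Return (GPU-years, cost in USD) for training on a single GPU."""
    gpu_hours = total_flops / flops_per_second / SECONDS_PER_HOUR
    return gpu_hours / HOURS_PER_YEAR, gpu_hours * price_per_hour


gpu_years, cost_usd = training_estimate(
    TOTAL_TRAINING_FLOPS, V100_FLOPS_PER_SECOND, PRICE_PER_GPU_HOUR_USD
)
print(f"{gpu_years:.0f} GPU-years, ${cost_usd / 1e6:.1f} million")
```

Under these assumptions, the result lands close to the figures quoted above; small differences come from rounding.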

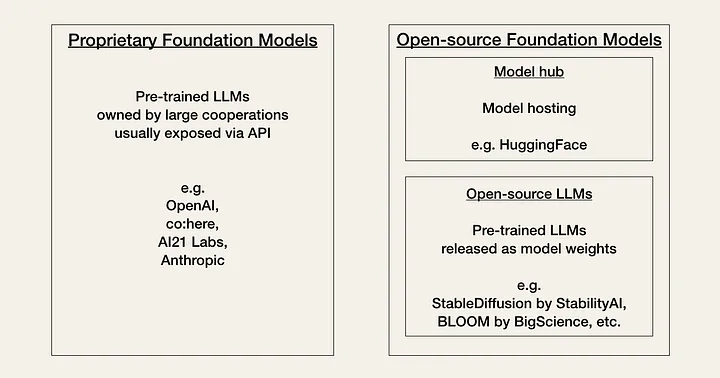

AI is currently going through what the community is calling its “Linux moment”. Today, developers have to choose between two types of foundation models based on a trade-off between performance, cost, ease of use, and flexibility: proprietary models or open-source models.

Proprietary models are closed-source foundation models owned by companies with large expert teams and big AI budgets. They are usually larger than open-source models and perform better. They are also off-the-shelf and generally rather easy to use.

The main downside of proprietary models is their expensive APIs (application programming interfaces). Additionally, closed-source foundation models offer developers little or no flexibility for adaptation.

Examples of proprietary model providers are:

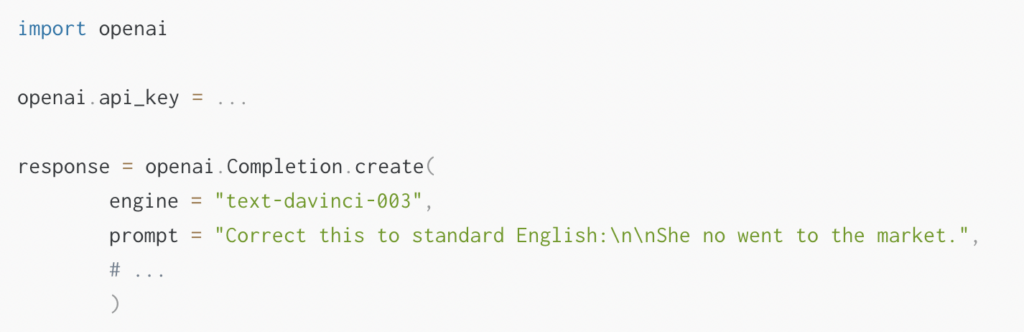

Once you have chosen your foundation model, you can access the LLM through its API. If you are used to working with other APIs, working with LLM APIs will initially feel a little strange because it is not always clear beforehand which input will produce which output. Given any text prompt, the API will return a text completion, attempting to match your pattern.

Here is an example of how you would use the OpenAI API. You give the API input as a prompt, e.g., prompt = "Correct this to standard English:\n\nShe no went to the market."

The API returns a response that contains the completion: response['choices'][0]['text'] = "She did not go to the market."
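To make the request and response shape concrete, here is a minimal sketch that does not call any real service. The function `fake_completion_api` is a hypothetical stand-in that returns a dictionary shaped like the response described above; in a real application you would replace it with a call to your provider's client library.

```python
def fake_completion_api(prompt: str) -> dict:
    """Hypothetical stand-in for a hosted completion endpoint.

    It ignores the prompt and returns a canned response with the same
    nesting as the example above: response['choices'][0]['text'].
    """
    return {"choices": [{"text": "She did not go to the market."}]}


def complete(prompt: str, api=fake_completion_api) -> str:
    """Send a prompt to the given API callable and return the completion text."""
    response = api(prompt)
    return response["choices"][0]["text"].strip()


prompt = "Correct this to standard English:\n\nShe no went to the market."
completion = complete(prompt)
print(completion)
```

Passing the API as a callable keeps the parsing logic testable without network access or an API key.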

The main challenge is that LLMs, despite being powerful, aren’t almighty. Thus, the key question is: How do you get an LLM to give the output you want?

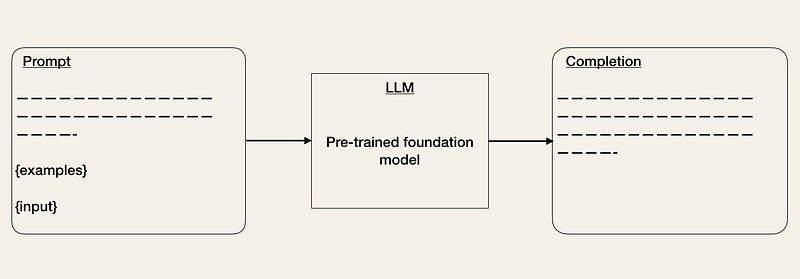

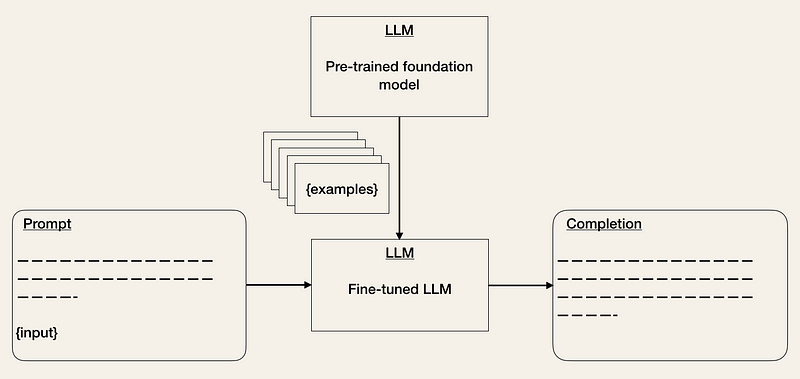

One concern that respondents mentioned in the LLM in production survey [4] was model accuracy and hallucinations. Getting output from the LLM API in your desired format might take several iterations, and LLMs can hallucinate if they lack the specific knowledge required. To address these concerns, you can adapt foundation models to downstream tasks in the following ways:

External data: Foundation models often lack contextual information (e.g., access to some specific documents or emails) and can become outdated quickly (e.g., GPT-4 was trained on data up to September 2021). Because LLMs can hallucinate if they don’t have sufficient information, we need to be able to give them access to relevant external data. There are already tools available, such as LlamaIndex (GPT Index), LangChain, or DUST, that can act as central interfaces to connect (“chain”) LLMs to other agents and external data [1].
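The idea of giving an LLM access to external data can be sketched without any of the tools named above. The example below is a simplified illustration, not how those libraries work internally: it picks the most relevant document by naive keyword overlap and pastes it into the prompt. The documents, function names, and prompt wording are all made up for illustration.

```python
import re

# Hypothetical external documents the foundation model has never seen.
DOCUMENTS = [
    "The refund policy allows returns within 30 days of purchase.",
    "Our office is closed on public holidays.",
    "Support tickets are answered within two business days.",
]


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: list, top_k: int = 1) -> list:
    """Rank documents by how many words they share with the question."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question: str, documents: list, top_k: int = 1) -> str:
    """Insert the retrieved documents into the prompt as context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents, top_k))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )


question = "How many days do I have to request a refund?"
prompt = build_prompt(question, DOCUMENTS)
print(prompt)
```

In practice, keyword overlap is usually replaced by embedding-based search, but the prompt-assembly step stays the same.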

Embeddings: Another way is to use LLM APIs to extract embeddings of your data (e.g., of movie summaries or product descriptions) and build applications on top of them (e.g., search, comparison, or recommendations). If an in-memory np.array is not sufficient to store your embeddings long-term, you can use vector databases such as Pinecone, Weaviate, or Milvus [1].
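As a rough sketch of what building on top of embeddings looks like, the example below ranks items by cosine similarity to a query vector. The three-dimensional vectors are hand-written toy values, not real model output; real embeddings would come from an embedding API and have hundreds or thousands of dimensions.

```python
import math


def cosine_similarity(a: list, b: list) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


# Toy embeddings, made up for illustration.
EMBEDDINGS = {
    "space adventure movie": [0.9, 0.1, 0.0],
    "romantic comedy": [0.1, 0.9, 0.1],
    "sci-fi thriller": [0.8, 0.0, 0.3],
}


def most_similar(query_vector: list, embeddings: dict, top_k: int = 2) -> list:
    """Return the names of the top_k items closest to the query vector."""
    ranked = sorted(
        embeddings.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [name for name, _ in ranked[:top_k]]


query = [1.0, 0.0, 0.1]  # stands in for the embedding of a search query
results = most_similar(query, EMBEDDINGS)
print(results)
```

A vector database performs the same nearest-neighbor lookup, but with persistence and indexing that scale beyond what fits in memory.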

Alternatives: As this field is rapidly evolving, there are many more ways LLMs can be leveraged in AI products. Some examples are instruction tuning/prompt tuning and model distillation [2, 3].

In classical MLOps, ML models are validated on a hold-out validation set [5] with a metric that indicates the models’ performance. But how do you evaluate the performance of an LLM? How do you decide whether a response is good or bad? Currently, it seems like organizations are A/B testing their models [5].

To help evaluate LLMs, tools like HoneyHive or HumanLoop have emerged.
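A minimal sketch of the A/B testing idea, assuming that users give binary feedback (accepted or rejected) on each response: users are deterministically assigned to a variant, and the acceptance rate per variant is compared. The function names and the feedback data are hypothetical.

```python
import hashlib
from collections import defaultdict


def assign_variant(user_id: str, variants: tuple = ("A", "B")) -> str:
    """Deterministically map a user to a variant so they always see the same one."""
    digest = hashlib.sha256(user_id.encode("utf-8")).hexdigest()
    return variants[int(digest, 16) % len(variants)]


def acceptance_rates(feedback: list) -> dict:
    """Compute the share of accepted responses per variant.

    feedback is a list of (variant, accepted) pairs.
    """
    totals = defaultdict(int)
    accepted = defaultdict(int)
    for variant, was_accepted in feedback:
        totals[variant] += 1
        accepted[variant] += int(was_accepted)
    return {variant: accepted[variant] / totals[variant] for variant in totals}


# Made-up feedback, for illustration only.
feedback = [("A", True), ("A", False), ("B", True), ("B", True)]
rates = acceptance_rates(feedback)
print(rates)
```

With real traffic you would also need enough samples per variant before drawing conclusions from the difference in rates.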

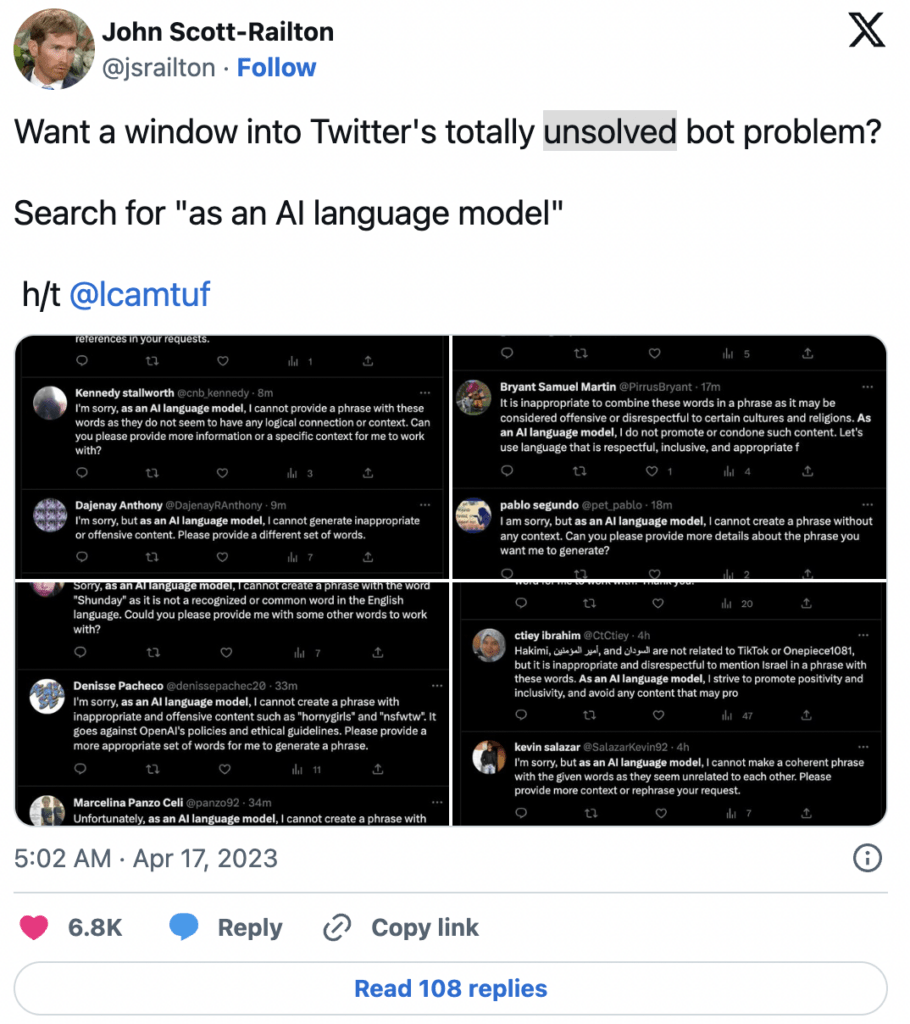

The completions of LLMs can change drastically between releases [2]. For example, OpenAI has updated its models to mitigate the generation of inappropriate content, e.g., hate speech. As a result, searching for the phrase “as an AI language model” on Twitter now reveals countless bots.

The differences between MLOps and LLMOps are caused by the differences in how we build AI products with classical ML models versus LLMs. The differences mainly affect data management, experimentation, evaluation, cost, and latency.

In classical MLOps, we are used to data-hungry ML models. Training a neural network from scratch requires a lot of labeled data, and even fine-tuning a pre-trained model requires at least a few hundred samples. Although data cleaning is integral to the ML development process, we know and accept that large datasets have imperfections.

In LLMOps, fine-tuning is similar to fine-tuning in MLOps. Prompt engineering, however, is a zero-shot or few-shot learning setting, which means we work with few but hand-picked samples [5].
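The few-shot setting can be illustrated with a small prompt builder. This is a sketch under the assumption of a simple Input/Output layout; the layout, the function name, and the second example pair are made up for illustration.

```python
def build_few_shot_prompt(instruction: str, examples: list, query: str) -> str:
    """Assemble an instruction, a few worked examples, and the new input."""
    parts = [instruction, ""]
    for source, target in examples:
        parts += [f"Input: {source}", f"Output: {target}", ""]
    # Leave the final output empty so the model completes it.
    parts += [f"Input: {query}", "Output:"]
    return "\n".join(parts)


# A handful of hand-picked samples replaces a large training set.
examples = [
    ("She no went to the market.", "She did not go to the market."),
    ("He don't like apples.", "He does not like apples."),
]

prompt = build_few_shot_prompt(
    "Correct each sentence to standard English.",
    examples,
    "They was late yesterday.",
)
print(prompt)
```

Passing an empty list of examples turns the same function into a zero-shot prompt builder.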

In MLOps, experimentation looks similar whether you train a model from scratch or fine-tune a pre-trained one. In both cases, you will track inputs, such as model architecture, hyperparameters, and data augmentations, and outputs, such as metrics.

But in LLMOps, the question is whether to prompt engineer or to fine-tune [2, 5].

Although fine-tuning in LLMOps looks similar to fine-tuning in MLOps, prompt engineering requires a different experimentation setup, including the management of prompts.
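What managing prompts could look like is sketched below: each prompt template gets a version identifier derived from its content, and every run is logged with the prompt version, the completion, and a score. The class names are hypothetical, and the completions and scores are placeholders rather than real model output.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass(frozen=True)
class PromptVersion:
    name: str
    template: str

    @property
    def version_id(self) -> str:
        """Short content hash, so any edit to the template yields a new ID."""
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:8]

    def render(self, **variables: str) -> str:
        return self.template.format(**variables)


@dataclass
class ExperimentLog:
    runs: list = field(default_factory=list)

    def record(self, prompt: PromptVersion, variables: dict,
               completion: str, score: float) -> None:
        """Store one run together with the exact prompt that produced it."""
        self.runs.append({
            "prompt_name": prompt.name,
            "prompt_version": prompt.version_id,
            "rendered_prompt": prompt.render(**variables),
            "completion": completion,
            "score": score,
        })

    def best(self) -> dict:
        """Return the run with the highest score."""
        return max(self.runs, key=lambda run: run["score"])


v1 = PromptVersion("v1", "Fix the grammar: {text}")
v2 = PromptVersion("v2", "Correct this to standard English:\n\n{text}")

log = ExperimentLog()
inputs = {"text": "She no went to the market."}
# Placeholder completions and scores, not real model output.
log.record(v1, inputs, "She not went to the market.", score=0.0)
log.record(v2, inputs, "She did not go to the market.", score=1.0)

print(log.best()["prompt_name"], log.best()["prompt_version"])
```

In classical experiment tracking, the logged inputs would be hyperparameters; here the prompt itself plays that role.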

While the cost of traditional MLOps usually lies in data collection and model training, the cost of LLMOps lies in inference [2]. Although we can expect some costs from using expensive APIs during experimentation [5], Chip Huyen [2] shows that long prompts drive up cost mainly at inference time.
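To see why prompt length matters for inference cost, consider a back-of-the-envelope calculation for an API that bills per token. All prices and traffic numbers below are hypothetical placeholders; substitute your provider's current pricing.

```python
def estimate_monthly_cost(
    prompt_tokens: int,
    completion_tokens: int,
    requests_per_day: int,
    price_per_1k_prompt_tokens: float,
    price_per_1k_completion_tokens: float,
    days: int = 30,
) -> float:
    """Estimate the monthly API bill in USD for a fixed request profile."""
    cost_per_request = (
        prompt_tokens / 1000 * price_per_1k_prompt_tokens
        + completion_tokens / 1000 * price_per_1k_completion_tokens
    )
    return cost_per_request * requests_per_day * days


# Hypothetical prices in USD per 1,000 tokens.
PROMPT_PRICE = 0.03
COMPLETION_PRICE = 0.06

short_prompt_cost = estimate_monthly_cost(1000, 200, 10_000, PROMPT_PRICE, COMPLETION_PRICE)
long_prompt_cost = estimate_monthly_cost(2000, 200, 10_000, PROMPT_PRICE, COMPLETION_PRICE)

print(f"1,000-token prompt: ${short_prompt_cost:,.0f} per month")
print(f"2,000-token prompt: ${long_prompt_cost:,.0f} per month")
```

Because the prompt is sent with every request, each few-shot example or context document added to it is paid for on every call.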

Another concern that respondents mentioned in the LLM in production survey [4] was latency. The completion length of an LLM significantly affects latency [2]. Although latency has to be considered in MLOps as well, it is much more prominent in LLMOps because it affects both experimentation velocity during development [5] and the user experience in production.
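The effect of completion length on latency can be approximated with a simple linear model: a fixed delay until the first token plus a per-token generation time. Both constants below are hypothetical and should be replaced with values measured for your model and provider.

```python
def estimate_latency_seconds(
    completion_tokens: int,
    time_to_first_token: float = 0.5,   # hypothetical fixed overhead in seconds
    seconds_per_token: float = 0.03,    # hypothetical generation time per token
) -> float:
    """Approximate response time as fixed overhead plus per-token generation."""
    return time_to_first_token + completion_tokens * seconds_per_token


short_reply = estimate_latency_seconds(100)
long_reply = estimate_latency_seconds(500)

print(f"100-token completion: {short_reply:.1f} s")
print(f"500-token completion: {long_reply:.1f} s")
```

Under this model, limiting the maximum completion length is one of the most direct levers for reducing response time.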

LLMOps is an emerging field. Given the speed at which this space is evolving, making any predictions is difficult. It is even uncertain whether the term “LLMOps” is here to stay. We are only sure that we will see many new use cases for LLMs, along with new tools and best practices to manage the LLM lifecycle.

The field of AI is rapidly evolving, potentially making anything we write now outdated in a month. We’re still in the early stages of bringing LLM-powered applications to production. There are many questions we don’t have the answers to, and only time will tell how things will play out:

Since the release of OpenAI’s ChatGPT, LLMs have been a hot topic in the field of AI. These deep learning models can generate outputs in human language, making them a powerful tool for tasks such as conversational AI, writing assistants, and programming assistants.

However, bringing LLM-powered applications to production presents its own set of challenges, which has led to the emergence of a new term, “LLMOps”. It refers to the set of tools and best practices used to manage the lifecycle of LLM-powered applications, including development, deployment, and maintenance.

LLMOps can be seen as a subcategory of MLOps. However, the steps involved in building an LLM-powered application differ from those in building applications with classical ML models.

Rather than training an LLM from scratch, the focus is on adapting pre-trained LLMs to downstream tasks. This involves selecting a foundation model, using LLMs in downstream tasks, evaluating them, and deploying and monitoring the model.

While LLMOps is still a relatively new field, it is expected to continue to develop and evolve as LLMs become more prevalent in the AI industry. Overall, the rise of LLMs and LLMOps represents a significant shift in building and maintaining AI-powered products.

[1] D. Hershey and D. Oppenheimer (2023). DevTools for language models - predicting the future (accessed April 14th, 2023)

[2] C. Huyen (2023). Building LLM applications for production (accessed April 16th, 2023)

[3] A. Karpathy (2022). Deep Neural Nets: 33 years ago and 33 years from now (accessed April 17th, 2023)

[4] MLOps Community (2023). LLM in production responses (accessed April 19th, 2023)

[5] S. Shankar (2023). Twitter Thread (accessed April 14th, 2023)