W&B Weave: Develop GenAI apps with confidence

Building demos of Generative AI applications can seem simple, but getting them production-ready—and ensuring they perform at scale—is a different challenge.

W&B Weave helps companies build, test, and refine AI applications with confidence. Run detailed evaluations, debug with ease, and deliver high-performing AI apps to production.

From W&B Weave users

Mike Maloney

Co-founder and CDO – Neuralift

“I love Weave for a number of reasons. The fact that I could just add a library to our code and all of a sudden I’ve got a whole bunch of information about the GenAI portion of our product, in Weights & Biases, which I was already using and very familiar with. All those things that I’m watching for the performance of our AI, I can just now report on quickly and easily with Weave.”

Georgina Corrie

Applied Scientist – UnlikelyAI

“I think Weave is just really simple, and what you get is a whole lot of summary metrics that you normally spend a while configuring yourself, and now you get everything recorded. It just makes it so much quicker to debug what you’re doing and iterate quickly.”

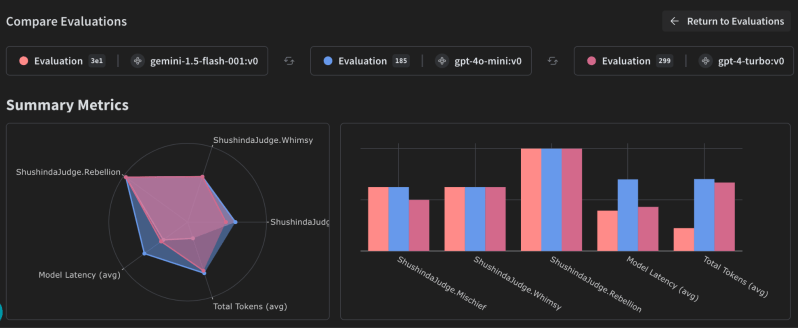

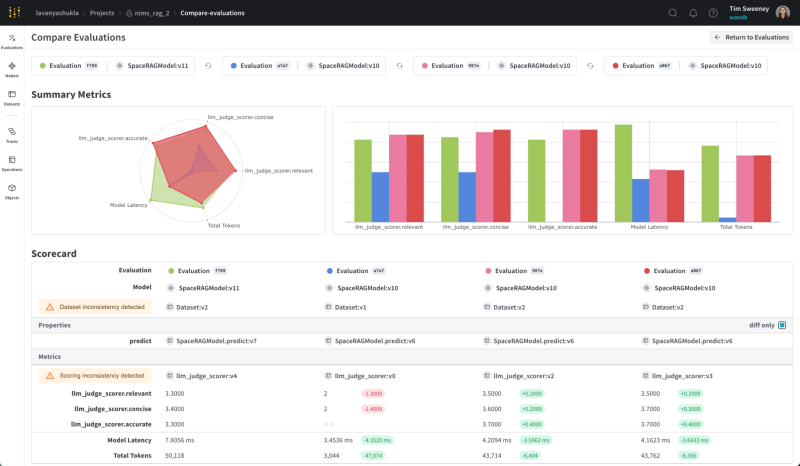

Evaluate and analyze the performance of LLMs

Compare evaluations of model results across different dimensions of performance to ensure applications are as robust as possible when deploying to production. Drill into details to analyze exactly where the model got it right and where it can improve. Review the inputs and outputs of every call to your model to make tweaks and steadily produce richer outputs.

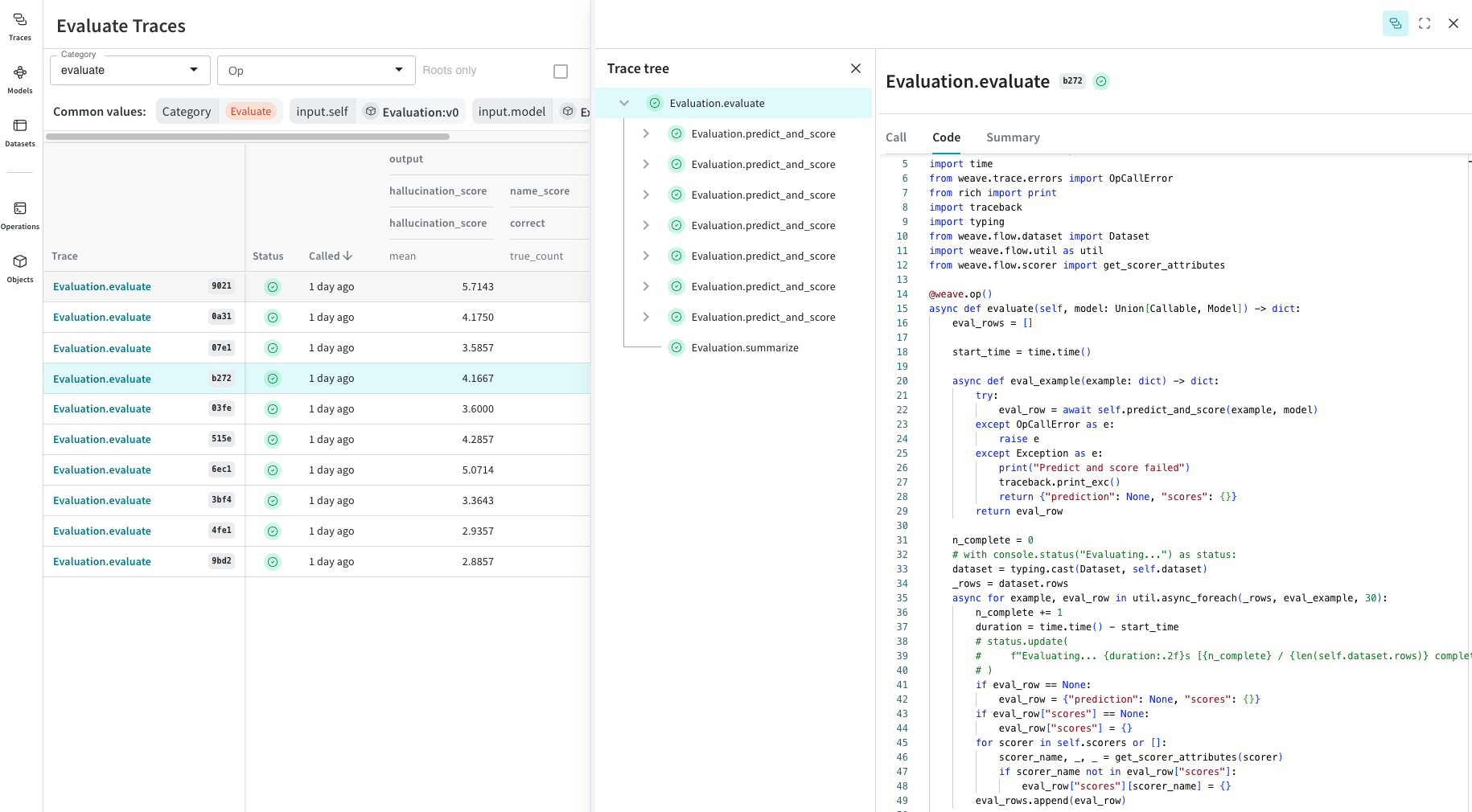

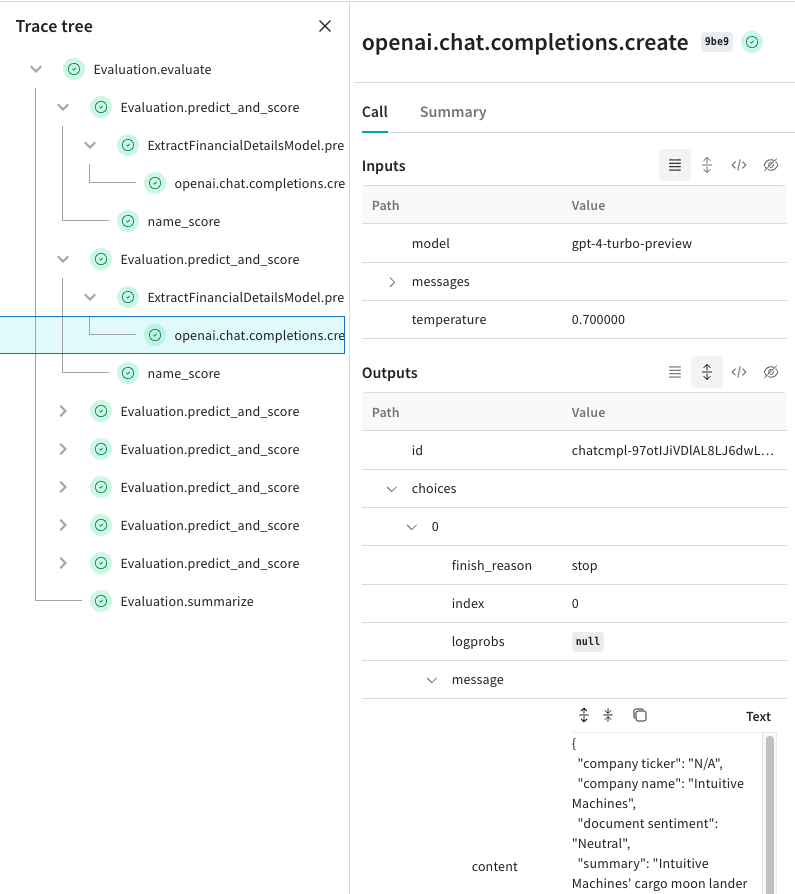

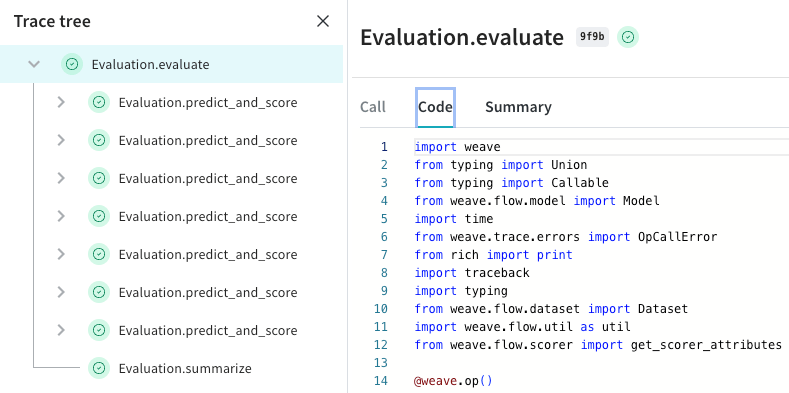

Log and debug inputs, outputs and traces

Weave automatically captures all input and output data and builds a tree to help you understand how data flows through your application. Drill into failure modes to find hallucinations or malformed responses, and analyze how different inputs affect document retrieval, tool use or any custom behaviors from your LLM application.
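To make the idea of a call tree concrete, here is a minimal sketch of how a decorator can record each function's inputs and outputs and nest child calls under their parent. It uses only the Python standard library and is not Weave's implementation or API; the names `traced`, `Call`, `ROOT_CALLS`, `retrieve`, and `answer` are hypothetical and exist only for illustration.

```python
import functools
from dataclasses import dataclass, field
from typing import Any, Callable, List


@dataclass
class Call:
    """One recorded function call: its name, inputs, output, and child calls."""
    name: str
    inputs: dict
    output: Any = None
    children: List["Call"] = field(default_factory=list)


# Hypothetical in-memory store; a real tracing tool would persist this elsewhere.
ROOT_CALLS: List[Call] = []
# Calls currently in progress, innermost last (single-threaded sketch only).
_stack: List[Call] = []


def traced(fn: Callable) -> Callable:
    """Record the inputs and output of fn and nest it under the active call."""
    @functools.wraps(fn)
    def wrapper(*args, **kwargs):
        call = Call(name=fn.__name__, inputs={"args": args, "kwargs": kwargs})
        # Attach to the parent if we are inside another traced call,
        # otherwise start a new tree.
        (_stack[-1].children if _stack else ROOT_CALLS).append(call)
        _stack.append(call)
        try:
            call.output = fn(*args, **kwargs)
            return call.output
        finally:
            _stack.pop()
    return wrapper


@traced
def retrieve(question: str) -> list:
    # Stand-in for a document retrieval step.
    return ["doc about " + question]


@traced
def answer(question: str) -> str:
    docs = retrieve(question)
    # Stand-in for an LLM call.
    return f"Answer based on {len(docs)} document(s)"


def render(call: Call, depth: int = 0) -> List[str]:
    """Flatten a call tree into indented lines for display."""
    lines = ["  " * depth + f"{call.name}{call.inputs['args']} -> {call.output!r}"]
    for child in call.children:
        lines.extend(render(child, depth + 1))
    return lines
```

Calling `answer("weave")` and then `render(ROOT_CALLS[0])` yields one line for `answer` with an indented line for `retrieve` beneath it, which is the kind of tree a tracing view lets you drill into when looking for a malformed response or a bad retrieval.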

Built for today’s software developers

Engineers iterating on Generative AI apps need tools built for working with dynamic, non-deterministic models. Weave is designed with the developer experience in mind, providing capabilities for straightforward evaluation and debugging of GenAI applications.

Get started with W&B Weave

The Weights & Biases end-to-end AI developer platform

The Weights & Biases platform helps you streamline your workflow from end to end

Models

Experiments

Track and visualize your ML experiments

Sweeps

Optimize your hyperparameters

Registry

Publish and share your ML models and datasets

Automations

Trigger workflows automatically

Weave

Traces

Explore and debug LLMs

Evaluations

Rigorous evaluations of GenAI applications